News

Read the latest news on extended reality and immersive media research coming out of UMD.

2026

International Delegation of SelectUSA Business Leaders Visits UMD

May 15, 2026

The visit, aimed at bringing foreign investment to the United States, included virtual reality demonstrations from the University of Maryland Institute for Health Computing.

On April 30, 2026, more than 30 visitors from around the world joined researchers from the University of Maryland Institute for Health Computing (UM-IHC) inside the HoloCamera studio, where 300 cameras are used to create virtual reality scenarios that enable viewers to “step into” a scene and view it from all angles.

Staff of the UM-IHC demonstrated three virtual modules: a “digital double” of an animatronic heart, an interactive human anatomical model complete with animated organs, and a 3D training simulation representing a common stroke scenario created for physician assistant students.

The demonstrations “made a clear impression about the university’s cutting-edge research in imaging, immersive media and next-generation visualization technologies,” said Sammy Popat, the University of Maryland’s innovation and entrepreneurship ecosystem catalyst. “We were so pleased that the delegation got to witness these UM-IHC capabilities.”

The group—including business leaders from South Korea, Myanmar, Brazil, Bangladesh, Vietnam, Kenya, and Bulgaria—was a delegation of SelectUSA, a national forum led by the U.S. Department of Commerce dedicated to promoting foreign direct investment in the United States.

“For most of the visitors, it was the first time they’d seen what this type of technology can do,” said Sujal Bista, who directs UM-IHC’s immersive visualization research. “It was very exciting to introduce them to our work and see their positive reactions.”

The delegation visited ahead of SelectUSA’s Investment Summit—held in Washington, D.C., this year—and also stopped in UMD’s Discovery District.

“Events like this highlight our deep commitment to fostering collaborations among academia, industry and international partners,” said Amitabh Varshney, dean of the College of Computer, Mathematical, and Natural Sciences and a professor of computer science at UMD. “It is these partnerships that drive innovation, create economic opportunity and position Maryland as a premier destination for tech-based founders and investors.”

Adam Porter, UM-IHC co-executive director and a professor of computer science at UMD, agreed.

“These visits and tech demonstrations are a great way to showcase UMD and the UM-IHC as high-level resources and value-add for any international businesses considering making Maryland their U.S. home,” he said.

https://ihc.umd.edu/international-delegation-of-selectusa-business-leaders-visits-umd/

A Virtual Feast for the Eyes and Ears

May 13, 2026

—Story by Jennifer Holland, College of Computer, Mathematical, and Natural Sciences communications group

Ruohan Gao didn’t set out to study sound. He began his work in computer vision, drawn to what he calls the most “obvious” way humans understand the world: by sight.

“Vision is so fundamental for many creatures, certainly for humans,” he said. “Think of how much vision has shaped the evolution of living things!”

But he knew that perspective was incomplete.

“Of course, when we interact with the world, we also listen, we also touch, we also feel,” he said.

When Gao joined the University of Maryland in 2025 as an assistant professor of computer science, that broader thinking pushed his research toward the multisensory dimensions of artificial intelligence (AI) and what he calls Multisensory Machine Intelligence—an approach that reflects people’s true multi-layered experience.

“We want to mimic—and maybe enhance—how humans hear, see and feel the world, by building intelligent machines that have those capacities in a multisensory virtual space,” said Gao, who has an affiliate appointment in the University of Maryland Institute for Advanced Computer Studies (UMIACS).

Filling the silence

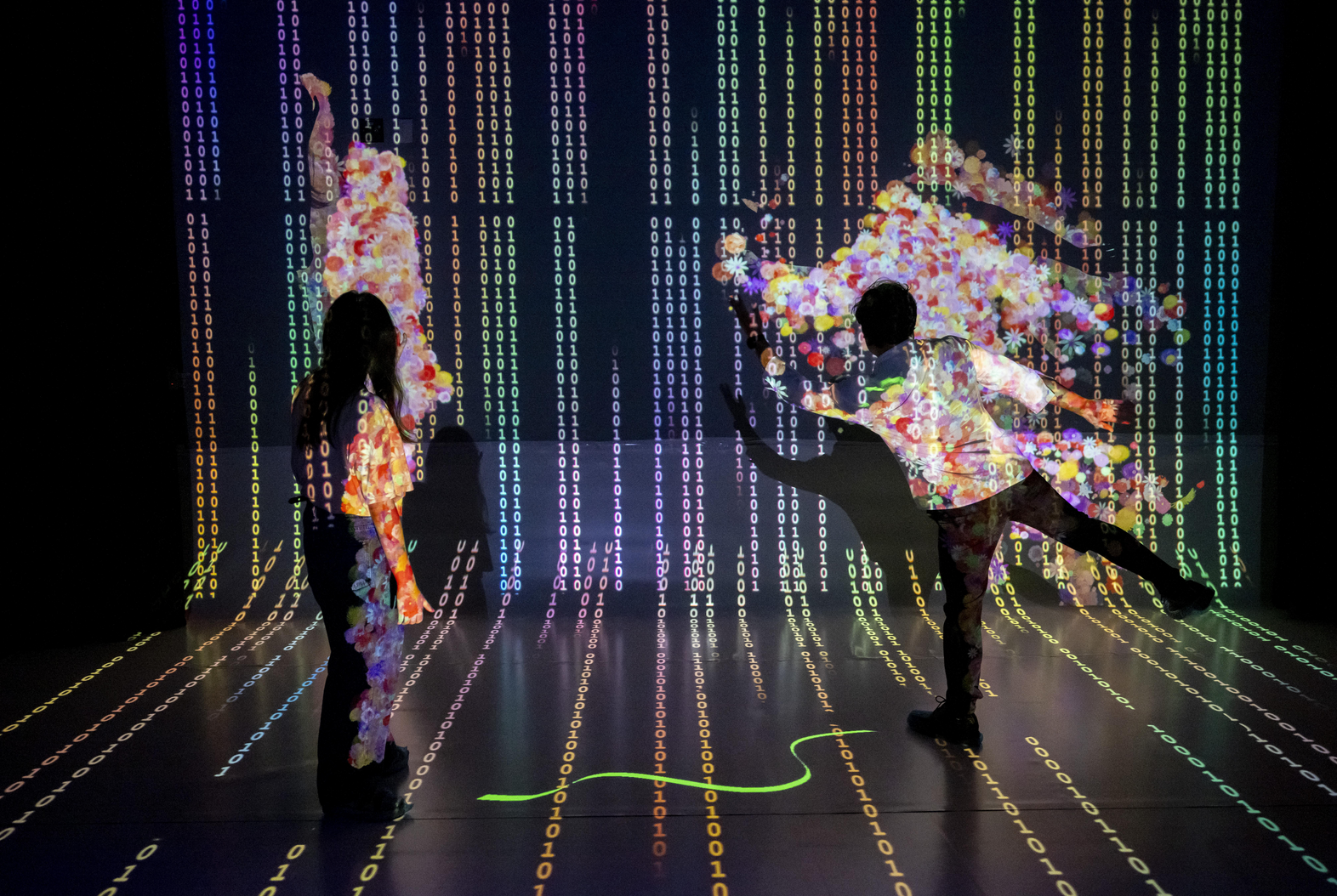

That’s where SonoWorld enters the picture.

SonoWorld is an explorable virtual landscape that fills a crucial gap in the virtual experience. While recent advances have made it possible to generate immersive 3D environments from single images, “all these frameworks have one missing ingredient,” he said. “They are silent.”

But sound is inseparable from how most of us experience the world.

“We are living in 3D, and we don’t just see what’s around us—we hear it,” he said. “We hear things we can’t see, too. I can hear you talking to me, but I can also hear birds chirping outside. I can hear the hum of the air conditioner in the building. Sound is everywhere.”

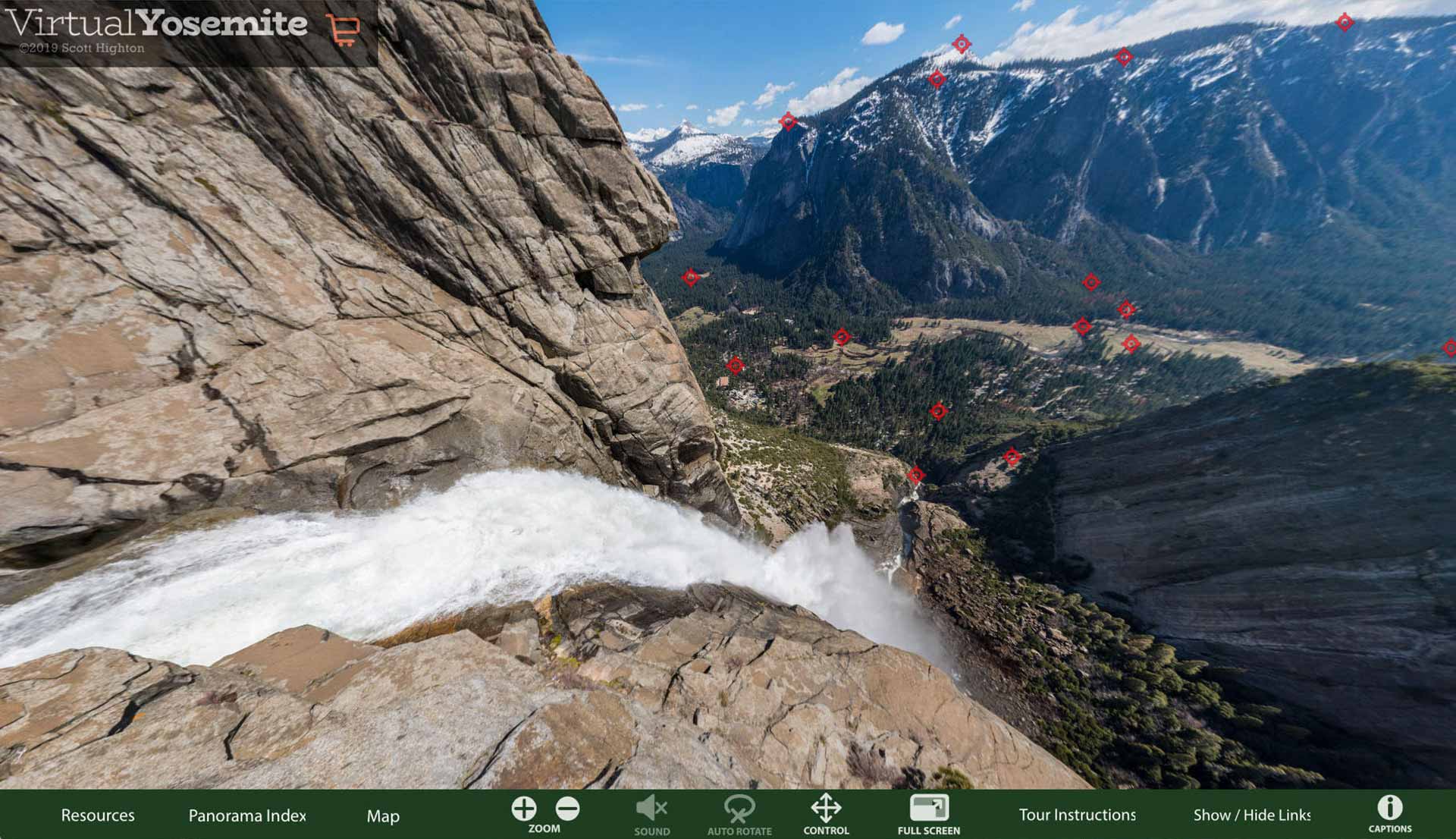

SonoWorld turns a single image into a fully immersive 3D audiovisual scene, allowing the user to “walk” deep into the image and hear what’s going on throughout.

“Our goal was to add a spatial sound field that is aligned with the visual content,” Gao explained, which means sound doesn’t just exist in the scene—it behaves like it would in real life.

“If there is a violin on your left, you should hear it from the left,” he said. “If you walk toward a waterfall, the sound should get louder.”

Achieving such complexity requires the system to do more than recognize objects. It has to infer the noises they make and the way those sounds change in different contexts.

“As a computational task, that’s very challenging,” he said.

But teamwork is powerful, noted Gao’s collaborator, Distinguished University Professor of Computer Science Ming Lin, who brought her decades of experience and expertise in computer animation, geometric modeling and virtual reality to help improve both the perceptual and physical realism of the final framework.

Also on the team were UMD computer science Ph.D. students Derong Jin and Xiyi Chen, who played key roles in developing the method and co-authored a paper on SonoWorld with Gao and Lin. The paper will be presented at the IEEE/Computer Vision Foundation Conference on Computer Vision and Pattern Recognition in June.

Sound stages

The process unfolds in several stages. First, the system expands the original image into a 360-degree view.

“We generate a 360 panorama to capture the whole scene,” Gao explained.

Next, the system uses AI foundation models to identify and map the locations of objects within that space—things like trees, rivers and buildings. The SonoWorld system then predicts which of those objects produce sound and what they should sound like.

“There are different types of sound sources,” Gao said. “Birds are a type of point source. A river is more like an area source. And then there are ambient sounds, like insects or wind. Each type requires different treatment in our program.”

Finally, the system generates spatial audio that matches the 3D environment.

“The sounds have to be attached to plausible locations in the 3D world,” he said, so that as a user moves through the scene, both the visuals and the audio shift naturally together.
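To make these stages concrete, here is a minimal Python sketch of how the three source types Gao describes might be rendered differently for a listener moving through a scene. It is not SonoWorld's method. The 2D positions, stereo output, inverse-distance falloff and constant-power panning are textbook simplifications assumed for illustration, and every class and function name is hypothetical.

```python
import math
from dataclasses import dataclass

# Illustrative only: assumes stereo output and 2D coordinates; not SonoWorld's code.

@dataclass
class Listener:
    x: float
    y: float
    heading: float  # radians, measured clockwise from the +y axis (0 = facing +y)

def point_gains(src_x, src_y, listener, ref_dist=1.0):
    """Left/right gains for a point source such as a bird or a violin.

    Loudness falls off as 1/distance (clamped at ref_dist), and the source
    is panned with a constant-power law based on its direction.
    """
    dx, dy = src_x - listener.x, src_y - listener.y
    dist = max(math.hypot(dx, dy), ref_dist)
    gain = ref_dist / dist
    # Direction relative to where the listener faces; positive = to the right.
    azimuth = math.atan2(dx, dy) - listener.heading
    pan = math.sin(azimuth)            # -1 = hard left, +1 = hard right
    angle = (pan + 1) * math.pi / 4    # map [-1, 1] onto [0, pi/2]
    return gain * math.cos(angle), gain * math.sin(angle)

def area_gains(points, listener):
    """Approximate an area source (e.g., a river) by averaging point samples."""
    left = right = 0.0
    for px, py in points:
        l, r = point_gains(px, py, listener)
        left += l
        right += r
    return left / len(points), right / len(points)

def ambient_gains(level):
    """Ambient sound (wind, insects): equal level in both ears, no direction."""
    return level * math.sqrt(0.5), level * math.sqrt(0.5)

me = Listener(0.0, 0.0, heading=0.0)
violin_l, violin_r = point_gains(-2.0, 0.0, me)
print(violin_l > violin_r)  # True: a violin on the left is heard from the left

far = sum(point_gains(0.0, 10.0, me))
near = sum(point_gains(0.0, 10.0, Listener(0.0, 8.0, 0.0)))
print(near > far)  # True: walking toward the waterfall makes it louder
```

Treating a river as many averaged point samples is just one simple way to give an extended source a spread-out position; a production system would likely use more sophisticated spatial audio rendering.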

The result, which the team demonstrated at Maryland Day 2026, is what Gao calls “an environment that can be explored visually and acoustically at the same time. SonoWorld is a world with built-in sensory layers, like the real thing.”

A more seamless (virtual) world

While the technology is still emerging, Gao sees immediate potential in a variety of entertainment experiences.

“For content creation, this is very useful,” he said. “Right now, you often have separate pipelines—one for visuals, one for sound. In the future, you could generate both together from a single image,” which could reshape workflows in filmmaking, gaming and digital media, making it easier and more efficient to create immersive experiences.

Gao also sees broader possibilities, like virtual and augmented reality, where sound plays a critical role in making environments feel real. Lin noted that the system may also be applicable to design.

“If an auditorium isn’t designed correctly for audio quality, a musical or other experience will be very different,” she explained. “For a designer to use this technology to make design choices is very powerful.”

Gao also sees long-term applications in robotics, where multisensory data could help machines better understand and interact with their surroundings, for example, in fine-grained, dexterous manipulation of objects.

Beyond any single application, Gao sees SonoWorld as part of a larger shift in AI.

“There’s a concept called spatial intelligence,” he said, referring to the ability of machines to understand the 3D world. “But I believe it should be multisensory spatial intelligence.”

In other words, a true understanding of the world can’t come from vision alone.

Layers to come

“There is still a lot to do,” Gao noted.

Current virtual environments are largely static, and one of the next challenges is adding motion—moving from 3D to what he calls “4D,” where both objects and sounds change over time.

“Life certainly doesn’t sit still,” he said. “Cars pass by, people move, sounds change.”

Plus, life is tactile, and touch is another sense the team hopes to program in. The team is also working on reconstructing and exploring worlds inside videos.

“It’s a big step because video includes multiple perspectives and a temporal component not present in a single image,” Lin said. “But we expect to be able to capture dynamic, moving objects and changing scenes very soon.”

The collaborative effort that led to SonoWorld’s release reflects the broader nature of the challenge itself. Multisensory intelligence sits at the intersection of multiple fields, and Gao believes the future of AI will depend on bringing those areas together.

“We should have a unified way to represent and understand this multisensory world,” he said, “instead of treating each modality separately.”

Because in the end, the goal is not just to build better models, but to build virtual systems that experience life in all its vibrance, using all the senses—just like we do in the real world.

https://www.umiacs.umd.edu/news-events/news/virtual-feast-eyes-and-ears

Studying Haptics to Reshape How People Learn Motor Skills

May 1, 2026

Story adapted from the Department of Computer Science

From learning to play an instrument to performing a surgical procedure, mastering physical skills often depends on repetition and correcting mistakes over time. University of Maryland (UMD) researchers in human-computer interaction are examining whether haptic technology can reshape that process by physically nudging users earlier in motor execution, rather than relying solely on post-error correction.

Kyungyeon Lee, a computer science Ph.D. student at UMD, is contributing to that research by exploring how hand exoskeleton systems can support motor sequence learning through preemptive feedback.

Lee developed a hand exoskeleton that shows how real-time error sensing can be paired with physical intervention. The system uses electromagnetic actuators and magnetic field sensors to detect and physically prevent users from making errors before they occur, with the entire sequence happening within 150 milliseconds.
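The preemptive idea can be illustrated with a small Python sketch: watch per-finger sensor readings, and if a finger that is not next in the sequence approaches a press, block it before the press happens. The story does not describe the exoskeleton's actual sensing or actuation logic, so the threshold, the normalized readings, the finger indexing and the class name below are all hypothetical assumptions.

```python
from dataclasses import dataclass, field

# Hypothetical: normalized sensor reading at which a press is treated as imminent.
PRE_PRESS_THRESHOLD = 0.6

@dataclass
class PreemptiveTrainer:
    sequence: list[int]   # expected finger order (0 = thumb ... 4 = pinky)
    step: int = 0
    blocked: list[int] = field(default_factory=list)

    def on_sample(self, readings: list[float]) -> str:
        """Process one frame of per-finger readings and return the action taken.

        If several fingers cross the threshold in one frame, the lowest
        finger index is handled first (a simplification).
        """
        if self.step >= len(self.sequence):
            return "done"
        target = self.sequence[self.step]
        for finger, value in enumerate(readings):
            if value < PRE_PRESS_THRESHOLD:
                continue
            if finger == target:
                self.step += 1
                return "ok"
            # Stand-in for energizing that finger's actuator before the wrong press lands.
            self.blocked.append(finger)
            return f"block finger {finger}"
        return "idle"

trainer = PreemptiveTrainer(sequence=[1, 2])
print(trainer.on_sample([0.0, 0.7, 0.1, 0.0, 0.0]))  # ok
print(trainer.on_sample([0.0, 0.0, 0.1, 0.8, 0.0]))  # block finger 3
```

In a physical device, the whole detect-and-block loop must finish before the finger completes its motion, which is why the article's 150-millisecond figure matters.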

In experimental studies, Lee found that preemptive feedback influenced how users approached learning, with participants showing increased confidence, greater awareness of potential errors, and improved learning performance compared with traditional feedback methods.

Although the study evaluated participants in a simple rhythm game task, the same concepts and training systems could be carried over to more high-stakes settings.

“In high-stakes training environments—like for pilots, surgeons, or air-traffic controllers—errors can be costly or dangerous, and systems that intervene before a mistake is made could change how people are trained for these roles,” Lee says.

Her paper outlining the project received the Best Paper Honorable Mention Award at the Association for Computing Machinery Conference on Human Factors in Computing Systems (ACM CHI 2026) in Barcelona, Spain, ranking among the top 5% of 6,740 submissions.

“Having this work accepted at ACM CHI with an Honorable Mention is very meaningful,” she says. “Our work takes a different approach from the mainstream view that skill learning relies solely on guidance, so it was especially rewarding to see this approach recognized.”

Lee is advised by Jun Nishida, an assistant professor of computer science with an appointment in the University of Maryland Institute for Advanced Computer Studies who co-authored the award-winning paper.

“Kyungyeon is a diligent and reliable researcher who consistently brings projects to completion. It is fascinating to see that she has been developing engineering skills from scratch while articulating a coherent research vision,” says Nishida.

Looking ahead, Lee said her future work will continue to examine how physical skills are learned.

“Learning new skills is inherently challenging but exciting,” she explains. “I would like to establish new ways to make this process not only effective, but also more empowering by enhancing users’ sense of agency and competence through personalization. I hope that haptic technologies can augment our physical capabilities, embodied knowledge, and the quality of our lives, as AI continues to advance.”

https://www.umiacs.umd.edu/news-events/news/studying-haptics-reshape-how-people-learn-motor-skills

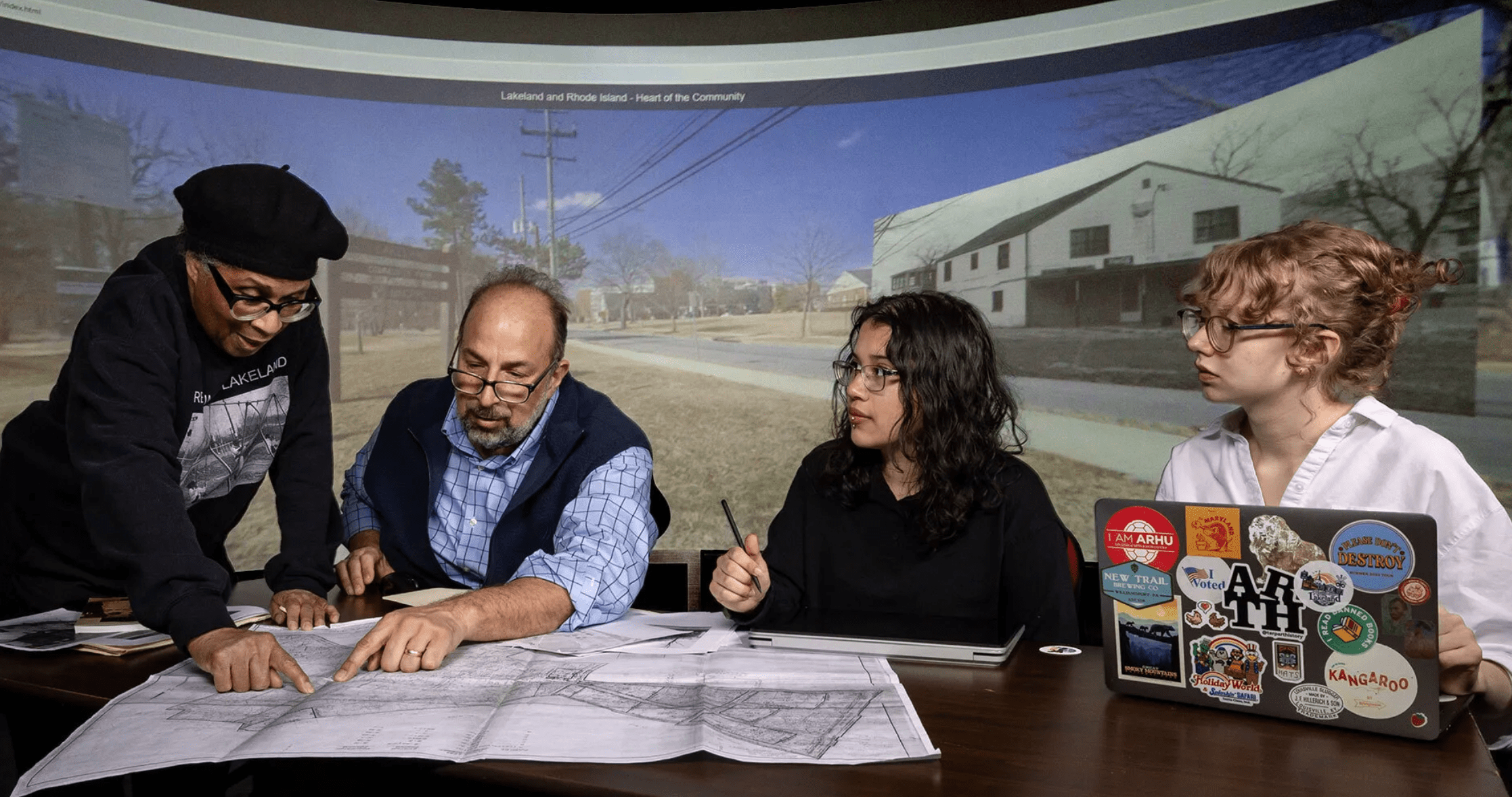

Digital Revival of a Displaced Community

New Exhibit Tells Story of Nearby Lakeland With Help From UMD Students and Faculty

May 12, 2026

At the virtual intersection of Lakeland Road and Rhode Island Avenue, a tableau that laces together past and present unfolds. Next to a solar-powered smart device telling riders when the next bus is coming sits the former Mack’s Market, a mid-century general store with an ice cream counter and a billiard parlor.

It’s one of the interactive digital streetscapes featured in a new exhibition at the College Park Aviation Museum that create a portrait of Lakeland, a vibrant Black neighborhood near Lake Artemesia that existed for 75 years before being mostly bulldozed.

“This is going to allow Lakelanders ... to see something they haven’t seen for many years, and show their children and grandchildren,” says Quint Gregory, director of UMD’s Michelle Smith Collaboratory for Visual Culture, who assisted in developing “Reclaiming Our Space: The Story of Lakeland,” an effort led by residents and students.

Lakeland originated in the 1890s as a resort-style suburban community, designed to offer wealthy families more space and privacy outside the city. Within a decade, a period of economic disruption, African American residents began to move in as white residents moved out.

Lakeland became a place where people picked apples from the trees in one another’s yards and had neighbors who felt like “cousins but you’re not quite sure how they’re your cousins,” says Maxine Gross ’81, chair of the Lakeland Community Heritage Project (LCHP).

Flooding had plagued part of Lakeland from its beginnings, and in the 1960s, community members asked for flood mitigation help from the local government. The result was the 1970 Lakeland Urban Renewal Plan, which had consequences they had fought against: the displacement of 104 out of 150 families to make way for Lake Artemesia Park, townhomes and apartment buildings.

“For that whole period of time, the community was in some degree of upheaval, uncertainty, living on the edge,” says Gross. “I’ve been living a lot with the voices of people who really fought hard and were truly disillusioned.” (The College Park Community Center stands on the site of Gross’ family’s former home.)

Using LCHP’s digital archive, graduate students in Gregory’s class on collaborative curation worked with Lakelanders to identify themes around which to shape the exhibition: business and entrepreneurship, connection to nature, religion, education and family. Research for the exhibition was partly funded by a grant from the Maryland 250 Commission, recognizing Marylanders’ contributions to U.S. history during its milestone anniversary.

American studies Ph.D. candidate Scherly Virgill helped develop the exhibition in Gregory’s class alongside graduate students Dramane Batiano, Hannah Brancato, John Hunter and Jamie Myre, and she currently works at the College Park Aviation Museum. “We want to make sure that those who still live in Lakeland and future generations can see Lakeland as an enduring place of hope and joy,” she says.

https://today.umd.edu/digital-revival-of-a-displaced-community

Connecting Nature and Technology Through Creative Design

May 8, 2026

Senior immersive media design major Holden Denyer connects digital experiences with the physical world.

At the University of Maryland’s annual spring open house called Maryland Day, senior immersive media design major Holden Denyer shared with visitors Odonata Odyssey, an educational video game he created that guides players through the full life cycle of a dragonfly from its point of view with just a few clicks.

Denyer handcrafted an arcade cabinet to house the game and custom-built physical buttons to curate a tangible learning experience for players at the event. Odonata Odyssey teaches players that dragonflies spend most of their lives underwater as aquatic predators before emerging as the familiar flying insects people recognize.

“Interacting with the game physically allowed facts and lessons to implant more thoroughly into their thought processes,” Denyer explained. “It wasn’t just a straightforward lecture or a brief show-and-tell. People were able to put themselves in a different perspective and learn in a very different yet still effective way.”

After the game’s success at last year’s Maryland Day, Entomology Professor Bill Lamp’s team brought it back in 2026 for visitors to the Plant Sciences Building. Denyer also created a web browser version of the game to increase accessibility for those who couldn’t make it to campus or who wanted to play the game again on their own.

“This project is an example of what I think technology should create; the game doesn’t replace real-world experiences but deepens them instead,” Denyer said. “That’s my goal, blending logical thinking and tech with artistic expression and creativity. UMD’s IMD program helped me develop the skills needed to do just that and now it feels like home.”

Investigating insects with interactive gaming

Denyer’s path to creating Odonata Odyssey started in a biological sciences classroom. He first became interested in entomology after taking an elective course, BSCI 145: “The Insect Apocalypse: Real or Imagined?” with Lamp.

Fascinated by what he learned, Denyer pursued a summer research internship with the U.S. Department of Agriculture through the Resilience Climate Adaptation Program (RCAP), which was affiliated with the Lamp lab. There, he assisted graduate student researcher Robert Salerno (M.S. ’25, entomology) in studying the crucial role arthropods play in maintaining agricultural health. Denyer personally designed the 3D model for the bait lamina strips—strips inserted into soil to measure the feeding activity of soil invertebrates—used in their experiments. He also oversaw the production of several hundred units 3D-printed on campus, watching his design go from a digital file to physical tools used in real scientific research. Denyer’s bait lamina strips were used to closely monitor soil health in Maryland agricultural fields, gauging the effects of pesticides, fertilizer and land use (crop monocultures, soil tillage and aeration techniques, etc.) on beneficial insects hiding in the ground.

“I worked closely with farmers, helping them know about more efficient agricultural processes involving how they work with their soil that could be economically beneficial to them as well,” Denyer explained. “Translating the data and numbers into practice, helping them and the environment, showed me that I could make a difference using both science and creative thinking.”

That intersection of science and communication led Denyer back to Lamp’s lab to develop Odonata Odyssey and other ways to bring the wonders of insect biology to the public. He continues to assist with bait lamina testing, helping to communicate complex data to farmers and other stakeholders with his expertise.

Following his graduation in May, Denyer plans to work in museum and interactive exhibit design at organizations and production firms where education is front and center. For Denyer, Odonata Odyssey’s continued success is proof of concept for the career he wants to build. He hopes to create more digital tools that draw people closer to the natural world.

“There’s an important place in between interacting with the world around us and a digital screen,” Denyer said. “That spot in between those two things is like a golden zone—that’s the goal I have in my mind.”

https://cmns.umd.edu/news-events/news/holden-denyer-connecting-nature-and-technology-through-creative-design

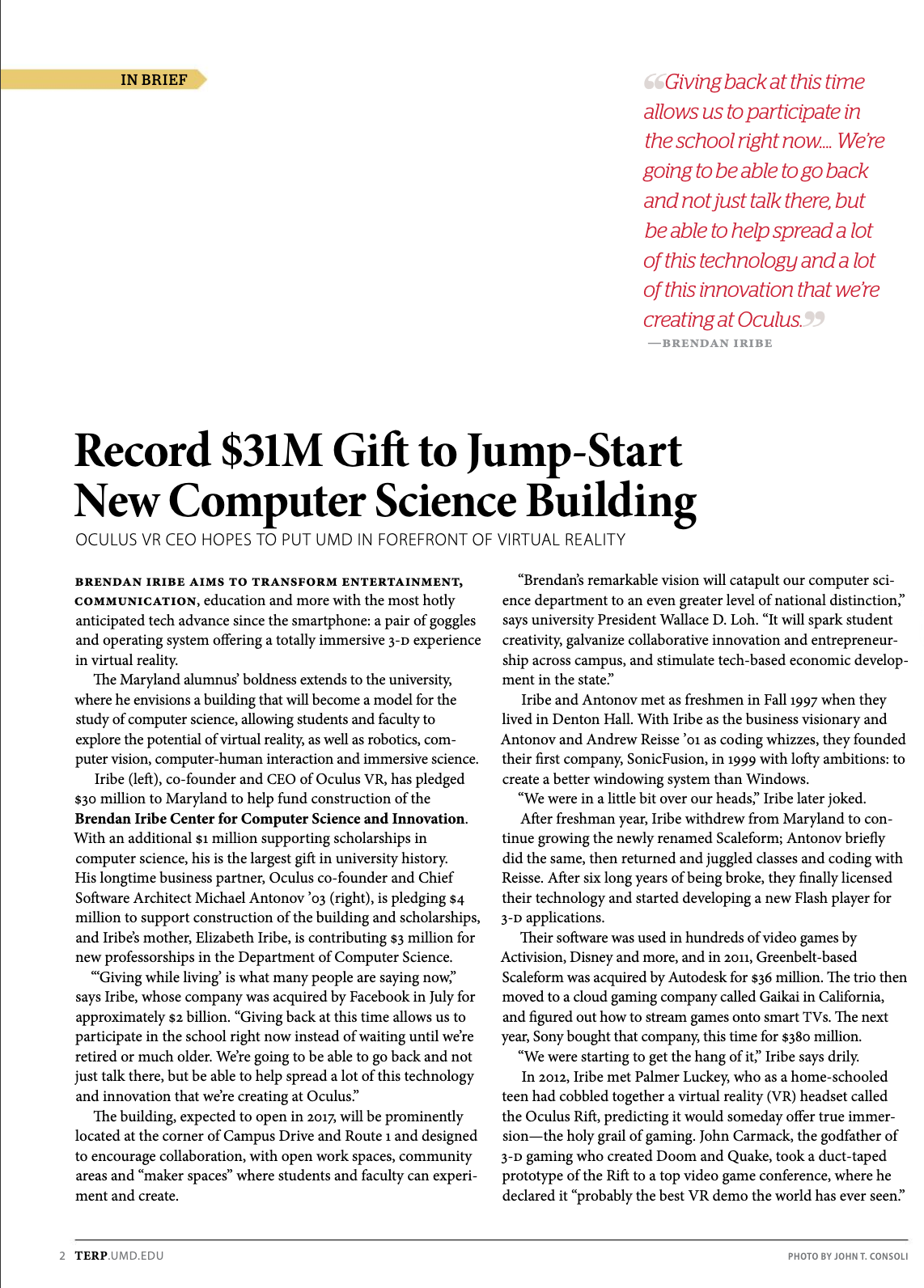

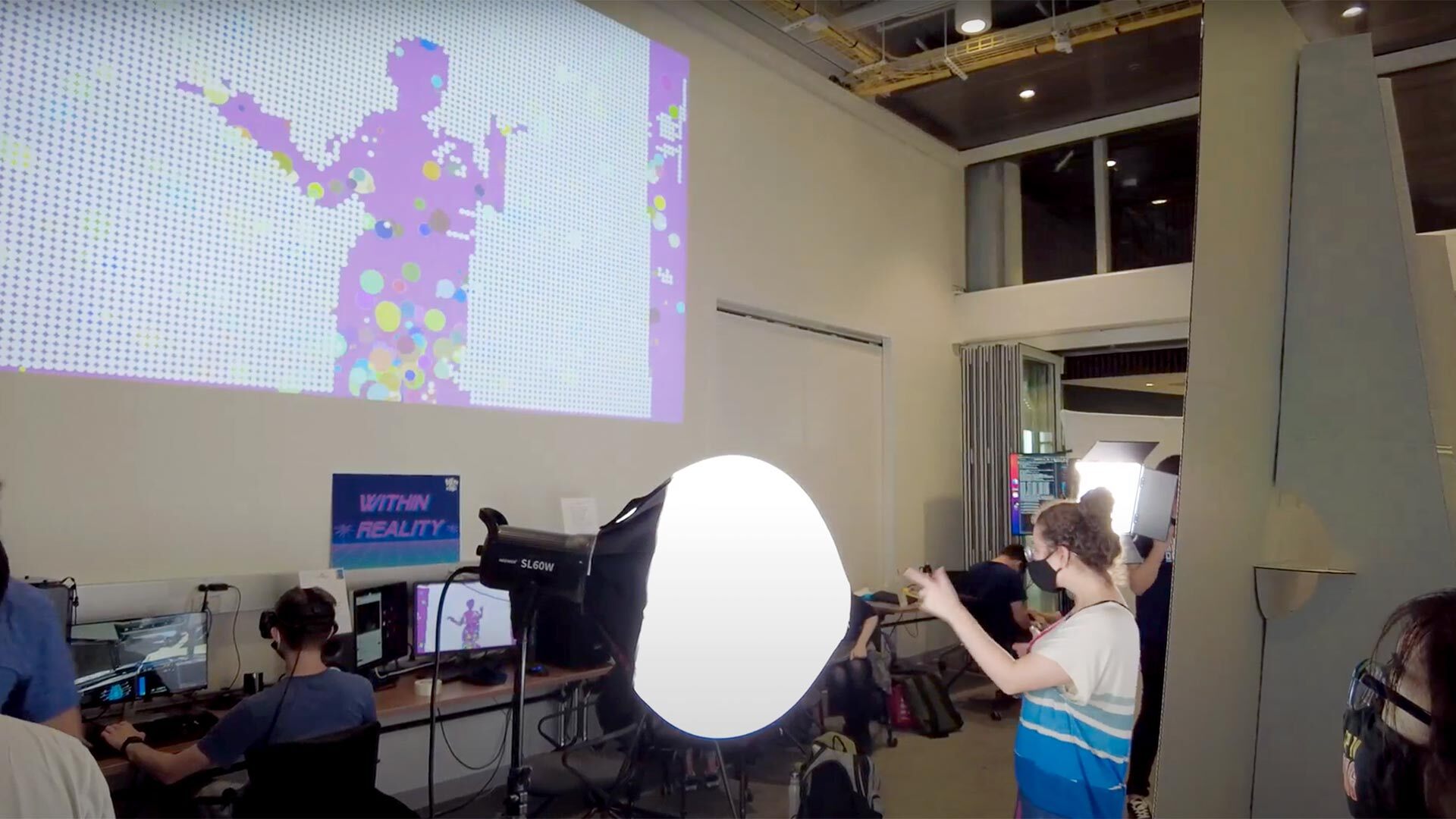

From March 27 to 29 in the Do Good Accelerator, students from UMD and Johns Hopkins University came together for Social Impact BuildFest, a weekend-long event where curiosity and collaboration took center stage. Teams composed of students from all backgrounds, coders and non-coders alike, worked side by side, guided by technical mentors and social impact experts, to prototype augmented reality experiences designed to tackle real-world challenges. Check out some of our favorite moments from the weekend.

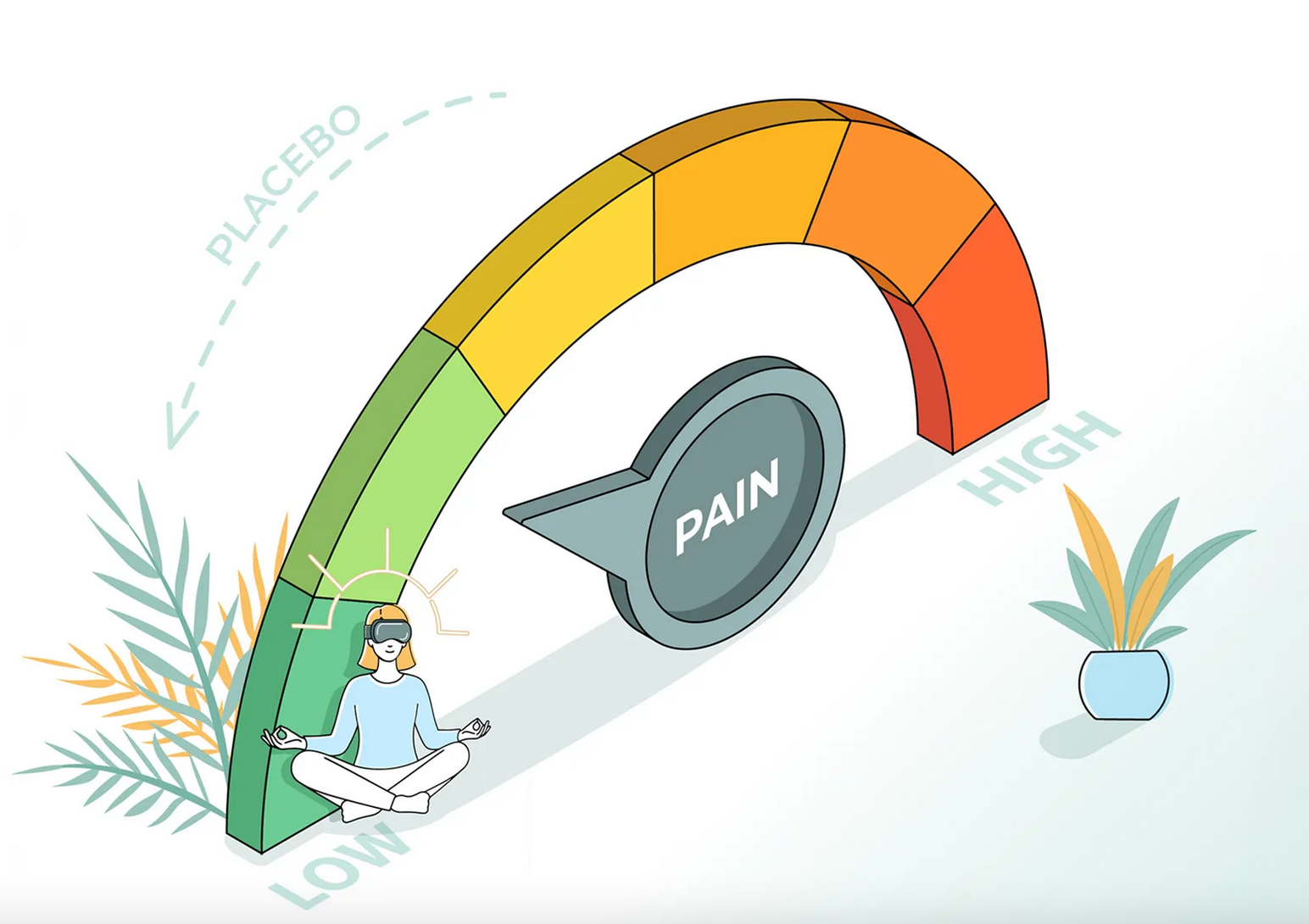

UMD-led Study Supports Role for VR in Pain Relief

Observing Others, Even Digital Avatars, Affects How We Feel Pain Ourselves

February 23, 2026

By Jennifer S. Holland M.S. ’98

If you sit on a tack, it’s likely to hurt. But pain is more than a physical experience; it’s a phenomenon affected by our emotions, past experiences and the people around us.

Apparently, pain relief is similarly complex.

A new study published in Nature’s journal npj Digital Medicine reveals that watching someone else experience pain relief, whether it’s another human or a digital representation of one, can meaningfully reduce the hurt we feel ourselves. But context matters: Who we are watching and how they are presented influence the strength of this placebo effect.

For this study, a team led by researchers from the University of Maryland, College Park and the University of Maryland, Baltimore, including co-authors from the University of Maryland Institute for Health Computing (UM-IHC), investigated how observational vicarious learning (learning by watching others’ therapeutic outcomes) can induce firsthand placebo analgesia, a phenomenon in which pain eases without an active treatment.

Participant responses revealed that the placebo effect varied depending on whether the demonstrator receiving a treatment was a human or a cartoon-like avatar, and whether the individual was presented in immersive virtual reality (VR) or standard video. The team’s findings suggest that both avatars and immersive technology can play a valuable role in mediating pain relief through the placebo effect.

“This study provides new insight into how learning from others—across different forms and formats—can shape how we experience pain reduction ourselves,” said Luana Colloca, an MPower Professor of Pain and Translational Symptom Science in UMB’s School of Nursing and director of the Placebo Beyond Opinions Center. The project is part of the MPowering the State Initiative, which leverages the complementary research prowess of UMCP and UMB for the good of all Marylanders. “What surprised us most was that immersive virtual environments and even avatars could amplify placebo analgesia. This opens new possibilities for integrating digital tools into pain management strategies.”

In the National Institutes of Health-funded study, 47 participants watched videos of a human demonstrator receiving painful heat stimulation. Study participants continued to watch as two creams, one blue and one green, were applied to the affected area, and the subject appeared to feel relief from one of them—even though the creams were identical and neither had analgesic properties.

Later, participants received the same heat stimulation and the same two creams. Even without an explicit promise of improvement, participants reported less pain when using the cream they had seen “work” on the demonstrator, providing evidence that pain relief can be socially learned without overt suggestion.

With the immersive reality expertise of UMCP Computer Science Professor Amitabh Varshney, dean of the College of Computer, Mathematical, and Natural Sciences, the researchers also investigated whether study participants’ experiences differed depending on the technology—using VR goggles and surround sound versus standard video on a screen—and whether the demonstrator was an actual human or a digital avatar.

When participants used immersive VR, avatars were significantly more effective at eliciting pain relief in study participants than human actors; however, human actors produced stronger effects using a normal screen. For any clinical application, the work underscores the importance of tailoring digital interventions to the delivery format.

Colloca noted that VR’s ability to “tap into social learning mechanisms” makes it powerful, and that the tool is already being used in clinical settings, from rehab to mental health to medical training. Watching how people engage with virtual systems and understanding the factors that affect their experiences can inform an array of next-generation digital health care tools for pain management and beyond, she said.

Varshney, who has a joint appointment in the University of Maryland Institute for Advanced Computer Studies, agreed the technology merits further study as a component of pain relief and other therapies.

“This research shows how VR avatars can inspire empathy and produce powerful placebo effects for pain management in immersive environments. It underscores the vast potential of immersive digital therapeutics to transform health care,” he said.

In addition to Varshney, UMCP authors of the study included Barbara Brawn, director of strategic initiatives for UM-IHC and managing director of the Center for Medical Innovations in Extended Reality (MIXR); and Jonathan Heagerty and Sida Li, researchers for the Mixed/Augmented/Virtual Reality Community (MAVRIC) initiative, both of whom hold UM-IHC appointments.

https://today.umd.edu/umd-led-study-supports-role-for-vr-in-pain-relief

Virtual Game, Real Learning

VR Reading Adventure Improves Skills for Dyslexic Youth

January 15, 2026

DYSLEXIA ISN’T JUST FRUSTRATING for children struggling to decipher squiggles as letters. At schools that can’t support students one-on-one, it can be costly for parents who turn to private reading tutors.

A new virtual reality game led by Terps offers an alternative, helping kids to learn letters, build words, and connect sight and sound as they race through obstacles in a fantasy world—while still in their classrooms.

IRIS Reads, co-developed by education Associate Professor Donald J. Bolger, linguistics Professor Juan Uriagereka and physics Professor Drew Baden and helmed by CEO Anne-Laurence Nemorin ’20, has students ages 8-13 travel to Antarctica, the Great Wall of China and the pyramids of Giza to chase time bandits who have stolen historic objects. Through each level, students might “grab” different letters out of the air to create words or “slice and dice” words into different syllables (à la Fruit Ninja) to gain confidence in reading.

Using a VR headset removes distractions, especially welcome for dyslexic kids who also struggle with attention and auditory or visual processing, says Bolger, who investigates the neurocognitive underpinnings of language development and reading. “Your whole body is engaged,” he says. “These are fundamental skills, but it’s also really fun.”

The game has been tested in schools around the D.C. metro area that specialize in dyslexia. Students have demonstrated a 13-22% increase in standard reading scores after playing regularly in class. The team plans to launch it this summer for home and classroom use.

https://terp.umd.edu/virtual-game-real-learning

Seeing is Believing

Landscape Architecture Terps Introduce Virtual Reality Futures for a State Park Threatened by Sea-Level Rise

Momentum Magazine Winter/Spring 2026

by Graham Binder

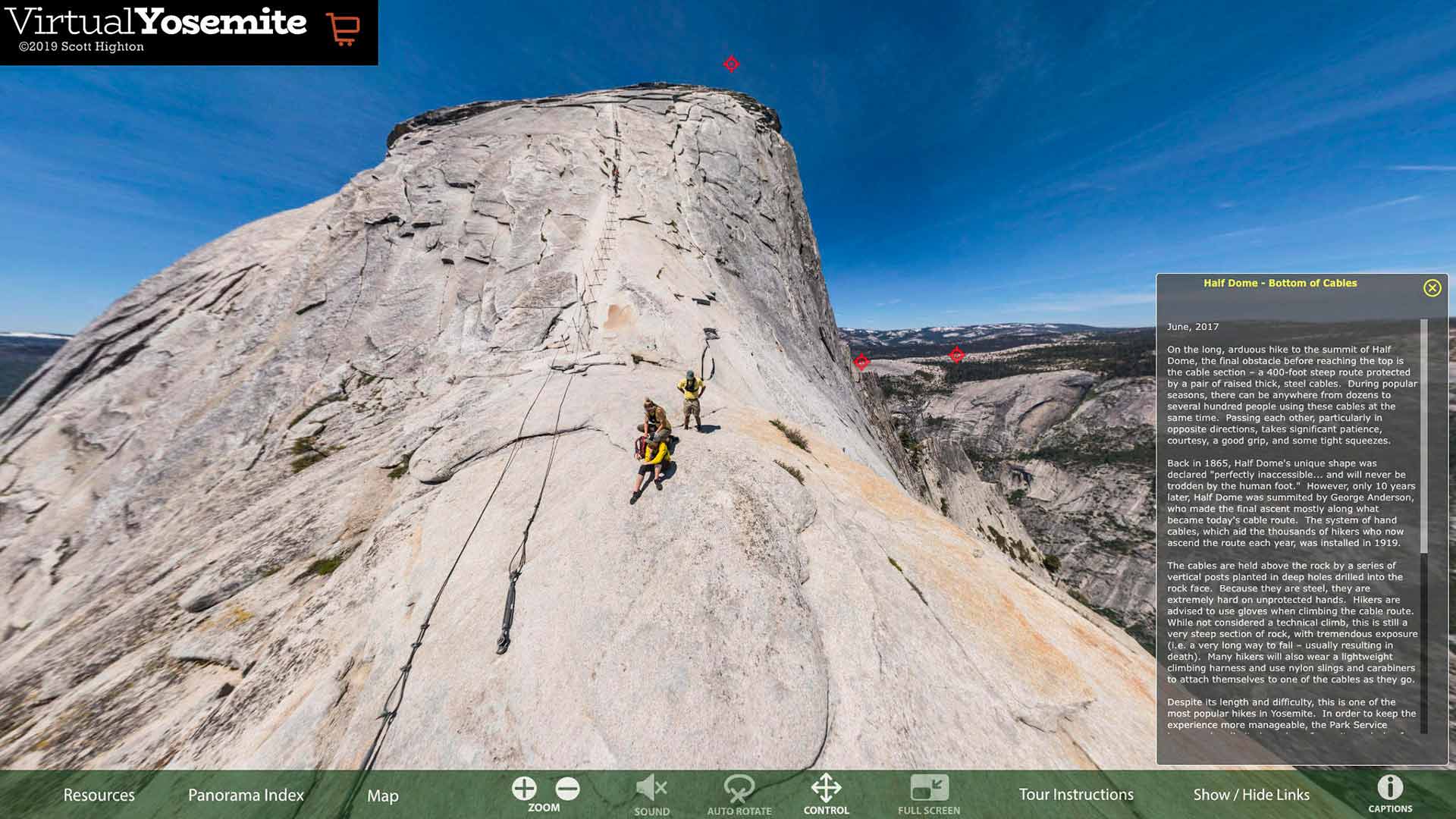

Beautiful Point Lookout State Park on the southernmost tip of Maryland’s Western Shore may soon be underwater, but if that feels too distant to the campers, fishermen, and swimmers who visit every year, maybe a virtual tour of the place 10, 20, or 30 years from now will help them better grasp the future. That’s what students in AGNR’s Landscape Architecture (LARC) studio program were shooting for when they created a series of 360-degree 3D visualizations depicting the problem, as well as remedial infrastructure upgrades to help the park fight back against changing climate conditions.

The LARC team, including Nicolaas (Nico) Drummond and Laura Crocker, in partnership with their professor Chris Ellis and Maryland’s Department of Natural Resources (DNR), was directly addressing sea-level rise, an unfortunate consequence of the world’s changing climate. It is already an issue that has plagued coastal homes and communities on Maryland’s Eastern Shore, turning once lush ecosystems into ghost forests, and thriving farms into decrepit remains of their former glory.

Strap on their VR headset and you’ll find yourself on a walkway in a park. All around you’ll see trees, a road, a marsh, and a vast coastline against the sky. Then comes the toll of a bell, which is meant to represent a ten-year jump forward, and suddenly the water encroaches up to the edges of marsh grass. Another bell tolls, and the water has hit the roadway. A final bell, and the water has now risen above your feet, and everything around you is inundated. But don’t dwell on the bad news, because up next are the fixes, with VR-depicted designs of floating wetlands, boardwalks, and raised viewing platforms, healthy living (stabilized) shorelines, migration of marshes to higher ground, and more.

“More so than sharing the design concepts, we wanted to convey to the park rangers that this environment is going to change,” Drummond said. “The conditions that they know now are not going to be the same in the future.”

“As landscape architects, we are helping people see what the future would look like,” Crocker said. “Don’t give up on these sites, as there are so many things we can do! There are still ways to make them ecological, habitable, and sustainable.”

With the conceptual designs now established under the guidance of DNR, the team hopes to see these projects move forward, working towards cost estimates for the build in pursuit of the ultimate goal, to see these upgrades realized to ensure the park thrives for decades into the future.

https://agnr.umd.edu/momentum-magazine/winterspring-2026/seeing-believing/

2025

Using Virtual Reality, Students Help Visualize Climate Change Solutions at Point Lookout State Park

October 7, 2025

By Joe Zimmermann, science writer for the Maryland Department of Natural Resources

University of Maryland projects highlight adaptive management to sea-level rise and other changes

You’re on a walkway in a park. You can see trees, a road, a marsh and a coastline against a vibrant blue sky all around you.

Then, you hear the toll of a bell. The marsh expands, and the water edges up the grass. Another bell, and the water creeps up to the base of the roadway. Eventually, when you look down, the water is under your feet; the raised walkway that once snaked through greenery is now surrounded by water.

Each sound of the bell represents 10 years passing, allowing viewers to see the effects of climate change and rising sea levels in a virtual space all around them. What you’re seeing is part of a series of projects by landscape architecture students at the University of Maryland, College Park to use virtual reality to visualize climate change at Point Lookout State Park, as well as possible adaptations to shifting conditions.

“When you see the water come under you, and hear the bird sounds turn to wave sounds, I think it helps people understand [climate change] in a different way,” said Nico Drummond, a landscape architecture major who was part of the team that designed the project that used the tolling bell.

The work began when Maryland Department of Natural Resources staff approached the university’s Partnership for Action Learning in Sustainability program about opportunities to highlight the effects of climate change in the state.

Chris Ellis, a landscape architecture professor, said the class wanted to look at a particular state park and landed on Point Lookout State Park as a fitting site. Located at the southernmost tip of St. Mary’s County at the confluence of the Potomac River and the Chesapeake Bay, Point Lookout is susceptible to sea-level rise and other effects of a changing environment. Sea levels could rise by 1.5 to 2.5 feet at the park over the next 25 to 50 years.

The class split into groups, with each one looking at a different area of the park and how it could change in the coming decades.

“The students were looking at the park from a large scale: what are the changes going to be in terms of sea-level rise,” Ellis said. “We started thinking, ‘What are the problems associated with that?’ And I’ll tell you, as we went through the semester, it was more like, ‘What are the opportunities that we can take advantage of?’ Because as the change happens, there are actually some really interesting things that may come from that.”

The student groups looked at a range of solutions that both protected the park land and offered new opportunities for recreation. Raised walkways crossed areas where wetland buffers allowed for marsh migration, and kayak trails passed by living shorelines and floating wetlands. Boardwalks featured educational panels about helical piers that can be adjusted for rising waters, and oyster reefs in Lake Conoy served as living breakwaters to protect the marsh.

The projects were focused on keeping the park adaptive and accessible to people, even under changing conditions.

“We were excited to host this virtual reality visioning project at Point Lookout,” said Ranger Jonas Williams, director of planning for the Maryland Park Service. “The students did a phenomenal job illustrating how the park may change in the future, giving park visitors a chance to see what climate change could mean for this unique and vital landscape. Projects and partnerships like this help the Park Service engage the public in understanding risks and opportunities, while guiding planning and adaptation efforts not only at Point Lookout State Park but across other at-risk parks in the years ahead.”

The projects will be viewable online on Meta Quest TV, which streams virtual reality content.

As part of the planning for the project, which took place over the spring semester, students visited Point Lookout and got to see the areas they spent the semester designing projects for. Eashana Subramanian, a landscape architecture major with a minor in sustainability studies, said she had been to the park as a child with her parents and appreciated the chance to come back and put forward ideas about the park’s future.

“It was really meaningful that I got to work on this place that I’ve visited too,” she said.

https://news.maryland.gov/dnr/2025/10/07/using-virtual-reality-students-help-visualize-climate-change-solutions-at-point-lookout-state-park/

The Effects of Warm Versus Cool Color Palettes Within Virtual Reality

Research article

First published online September 3, 2025

Ciara A. Fabian, Susannah B. F. Paletz, and Jason Aston

Abstract

Color theory plays a crucial role in design, technology, and art. This study examined how the color groups, warm and cool, affect individuals’ emotional and physiological states as measured using the Positive and Negative Affect Schedule and heart rate monitoring. Twenty participants viewed their assigned color simulation for 10 min within a virtual reality (VR) headset. We found that heart rate decreased significantly across the periods before, during, and after VR exposure. The interaction between time and the color condition was significant, such that the decrease in heart rate was steeper for those in the cool condition. There were no statistically significant differences in self-reported Positive Affect; however, self-reported Negative Affect decreased significantly for both the Warm and Cool groups after the VR exposure. The outcomes of this research on how colors affect users can help designers and developers make more informed decisions in the domains of UI/UX and front-end application development.

Introduction

Color theory is essential to designing interfaces (Kimmons, 2020). Whether for products or interior design, designers start by choosing a color palette. Given the growing use of Virtual Reality (VR; Thunström et al., 2022), we tested the effects of warm versus cool colors in VR on affect and physiological outcomes. This study replicates and extends prior research by addressing current gaps in the understanding of the effects of color groups within immersive headsets.

Background

Color theory and the effects of color have been studied in multiple domains (e.g., Art Therapy, Withrow, 2004). Designers use color theory to guide users’ attention (e.g., Fialkowski & Schofield, 2024). Colors can also establish a particular ambiance, such as in horror video games, where darker palettes are frequently used to create an ominous effect (Steinhaeusser et al., 2022). In environmental psychology, researchers have found that cool colors evoke feelings of calmness compared to warm colors, which increase physiological arousal (Yildirim et al., 2011).

These findings are similar to those within UI/UX design and video game development. Cha et al. (2020) evaluated the effects of color on heart rate and emotions by presenting the colors white, green, red, and blue to participants wearing an Oculus Rift VR headset for 2 min. They found that blue and green were rated more relaxing than red, which was more arousing and unpleasant (Cha et al., 2020). However, the participants’ heart rates decreased for each color, although red produced the smallest decrease of all the colors (Cha et al., 2020). Our study extends these findings to examine the effects of two broad color groups, warm and cool, on heart rate and emotions. Based on prior research on color theory’s potential psychological and physiological effects (AL-Ayash et al., 2016), we hypothesized that warm colors would increase heart rates and negative affect and decrease positive affect. We expected cool colors to decrease heart rate and negative affect while increasing positive affect.

Methods

Twenty participants were recruited from a Mid-Atlantic university and its surrounding areas. Participants were between the ages of 18 and 34 and self-reported no history of color blindness or epilepsy. Forty-five percent were men and 55% were women. Each participant was randomly assigned to a color condition, warm or cool, with 10 participants in each condition. The five warm colors were red (HEX #f60b0e), red-orange (HEX #ee1a25), orange (HEX #f04524), yellow-orange (HEX #ffb900), and yellow (HEX #fff100). The five cool colors were violet (HEX #432885), blue-violet (HEX #091b5d), blue (HEX #0051a2), blue-green (HEX #0d98ba), and green (HEX #04724c). There were 10 colors in total, five for each color group. The colors yellow-green and red-violet were omitted because they are crossovers of warm and cool colors. The colors were shown using an Oculus Quest 2 VR headset.
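The warm/cool split above can be sanity-checked by converting each listed hex code to an HSV hue angle. The sketch below is not part of the study's method: only the hex codes come from the text, and the `looks_warm` rule (a hue within 90° of pure red counts as warm) is a hypothetical heuristic chosen for illustration.

```python
import colorsys

# Stimulus colors as listed in the Methods (hex codes from the text).
WARM = {
    "red": "#f60b0e",
    "red-orange": "#ee1a25",
    "orange": "#f04524",
    "yellow-orange": "#ffb900",
    "yellow": "#fff100",
}
COOL = {
    "violet": "#432885",
    "blue-violet": "#091b5d",
    "blue": "#0051a2",
    "blue-green": "#0d98ba",
    "green": "#04724c",
}


def hue_degrees(hex_code: str) -> float:
    """Return the HSV hue of a '#rrggbb' color in degrees, in [0, 360)."""
    h = hex_code.lstrip("#")
    # Split into R, G, B channels and scale each to [0, 1] for colorsys.
    r, g, b = (int(h[i:i + 2], 16) / 255 for i in (0, 2, 4))
    hue, _sat, _val = colorsys.rgb_to_hsv(r, g, b)
    return hue * 360.0


def looks_warm(hex_code: str, max_dist_from_red: float = 90.0) -> bool:
    """Hypothetical heuristic: call a color 'warm' if its hue lies within
    max_dist_from_red degrees of pure red (0 degrees) on the color wheel."""
    hue = hue_degrees(hex_code)
    dist = min(hue, 360.0 - hue)  # circular distance to 0 degrees
    return dist <= max_dist_from_red


if __name__ == "__main__":
    for group, palette in (("warm", WARM), ("cool", COOL)):
        for name, code in palette.items():
            print(f"{group:4} {name:13} {code} hue={hue_degrees(code):6.1f}")
```

Under this assumed threshold, all five warm stimuli lie within about 57° of red (the two reds wrap around just below 360°), while the five cool stimuli fall between roughly 159° (green) and 257° (violet), so the palettes separate cleanly on hue alone.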

As with prior research (e.g., Liew et al., 2022), we used the Positive and Negative Affect Schedule (PANAS) to measure emotions (Watson et al., 1988). Participants filled out the PANAS-GEN scale (Watson et al., 1988), which includes 20 emotions (e.g., Distressed, Inspired, see below) on a 1 to 5 scale (1 = very slightly or not at all, 2 = a little, 3 = moderately, 4 = quite a bit, and 5 = extremely). We collected PANAS data before and after the heart rate monitor was worn, which was also before and after the VR color exposure. We collected the participants’ heart rates using an OxyU Bluetooth monitor on their left wrists. The device continuously monitored the participant’s heart rate before, during, and after the VR exposure.
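Because the wrist monitor records one continuous stream, per-phase heart-rate averages require splitting the samples at the moments the headset goes on and comes off. A minimal sketch, assuming a hypothetical list of `(seconds, bpm)` samples and a known exposure start time; the 10-min exposure and the 2-min post-exposure window come from the Methods, but the data format and the `phase_means` name are illustrative, not the authors' pipeline.

```python
from statistics import mean

EXPOSURE_S = 10 * 60  # 10-min VR color exposure (from the Methods)
POST_S = 2 * 60       # 2 min of additional monitoring after the headset is removed


def phase_means(samples, exposure_start_s):
    """Average heart rate (bpm) per phase for one participant.

    samples: iterable of (t_seconds, bpm) pairs from a continuous monitor.
    exposure_start_s: hypothetical timestamp at which the headset went on.
    Samples recorded POST_S or more after the exposure ends are ignored.
    """
    end = exposure_start_s + EXPOSURE_S
    phases = {"before": [], "during": [], "after": []}
    for t, bpm in samples:
        if t < exposure_start_s:
            phases["before"].append(bpm)
        elif t < end:
            phases["during"].append(bpm)
        elif t < end + POST_S:
            phases["after"].append(bpm)
    # mean() raises StatisticsError on an empty list, so skip empty phases.
    return {name: mean(vals) for name, vals in phases.items() if vals}


if __name__ == "__main__":
    # Synthetic samples, one every few minutes; not study data.
    demo = [(0, 80), (60, 78), (120, 75), (300, 72), (600, 70), (720, 68), (780, 66)]
    print(phase_means(demo, exposure_start_s=120))
```

Keeping the phase boundaries explicit makes it easy to drop segments flagged as unusable (for example, after excessive movement) before averaging.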

During the VR color exposure, the participants wore noise-canceling Peltor headphones to limit distractions. Participants were also asked to remain seated and not move excessively or remove the headset unless they wanted to end testing early, as any excess motion could result in unusable data from the heart rate monitor. While immersed in the Oculus Quest 2, participants viewed a VR room whose colors changed according to their assigned color condition, for 10 min total (as in other color studies, Litscher et al., 2013). If the participant was assigned to the Cool condition, they were shown the color variations of blue, violet, and green. If the participant was assigned to the Warm condition, they were shown color variations of red, yellow, and orange. The VR color exposure was also screen-cast to an experimenter’s laptop to monitor the point of view and ensure no technical problems could affect the manipulation.

After the VR color exposure, participants’ noise-canceling headphones and Oculus Quest 2 were removed. Heart rate was monitored for an additional 2 min to get a final reading and test for any changes after the 10-min VR color exposure. After removing the OxyU monitor, participants filled out the PANAS again. To analyze the changes in heart rate for each participant, the average was calculated for each phase: before, during, and after the VR color exposure. To aggregate each participant’s PANAS results, the 20 emotions were split into two groups. Positive Affect was the sum of Interested, Excited, Strong, Enthusiastic, Proud, Alert, Inspired, Determined, Attentive, and Active, and Negative Affect was the sum of Distressed, Upset, Guilty, Scared, Hostile, Irritable, Ashamed, Nervous, Jittery, and Afraid (Watson et al., 1988). Because each subscale sums 10 items rated 1 to 5, the Positive and Negative PANAS subscales could range from 10 to 50. Repeated Measures ANOVAs were used to test the effects of time and the two color conditions on heart rate and the two PANAS subscales.
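The subscale scoring described above amounts to two sums over fixed item lists. A minimal sketch, assuming responses arrive as a dict mapping lowercase item names to ratings from 1 to 5 (a hypothetical input format); the item split follows Watson et al. (1988) as listed in the text, and `score_panas` is an illustrative name.

```python
# PANAS item split as listed in the analysis (Watson et al., 1988).
POSITIVE_ITEMS = ("interested", "excited", "strong", "enthusiastic", "proud",
                  "alert", "inspired", "determined", "attentive", "active")
NEGATIVE_ITEMS = ("distressed", "upset", "guilty", "scared", "hostile",
                  "irritable", "ashamed", "nervous", "jittery", "afraid")


def score_panas(responses: dict[str, int]) -> tuple[int, int]:
    """Return (Positive Affect, Negative Affect) subscale sums.

    responses maps each of the 20 item names to a rating from
    1 (very slightly or not at all) to 5 (extremely), so each
    subscale falls in the 10-50 range.
    """
    for item in POSITIVE_ITEMS + NEGATIVE_ITEMS:
        rating = responses[item]  # raises KeyError if an item is missing
        if not 1 <= rating <= 5:
            raise ValueError(f"{item}: rating {rating} outside 1-5")
    pa = sum(responses[item] for item in POSITIVE_ITEMS)
    na = sum(responses[item] for item in NEGATIVE_ITEMS)
    return pa, na


if __name__ == "__main__":
    # Illustrative questionnaire: every positive item rated 3, every negative item 2.
    demo = {item: 3 for item in POSITIVE_ITEMS}
    demo.update({item: 2 for item in NEGATIVE_ITEMS})
    print(score_panas(demo))
```

Scoring the pre- and post-exposure questionnaires separately yields the two time points per subscale that enter the repeated-measures analysis.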

Outcome

There was a significant difference in the average participant heart rate before, during, and after being in the VR color exposure (p = .004), such that heart rate decreased (Table 1, Figure 1, error bars are means of standard errors). On average, participants in the cool color condition started with a higher heart rate, but their heart rates overall were not significantly higher than those in the warm condition (Table 1). However, the interaction between time and the color condition was significant, such that the decrease in heart rate was steeper for those in the cool condition (p = .002, Table 1). Post-hoc pairwise comparisons with a Bonferroni adjustment suggested this interaction was driven by differences between Before and After, t(18) = 5.22, p < .001, and between During and After within the Cool condition, t(18) = 3.48, p = .04. There were no significant differences in Positive Affect (Table 1). However, there was a significant decrease in self-reported Negative Affect for both the Warm and Cool groups after the VR color exposure (p < .001; Table 1 and Figure 2).
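For readers unfamiliar with the Bonferroni adjustment used in the post-hoc tests: each raw p-value is multiplied by the number of comparisons in the family and capped at 1, which is equivalent to testing each raw p-value against alpha divided by that number. The sketch below applies the rule to made-up p-values; the paper does not report its software or the size of its comparison family, so nothing here reproduces its numbers, and `bonferroni` is an illustrative name.

```python
def bonferroni(p_values, alpha=0.05):
    """Bonferroni-adjust a family of raw p-values.

    Returns (adjusted_p, significant) pairs, where adjusted_p is the raw
    p-value times the number of comparisons, capped at 1.0, and
    significant is True when adjusted_p < alpha.
    """
    m = len(p_values)
    adjusted = [min(1.0, p * m) for p in p_values]
    return [(p_adj, p_adj < alpha) for p_adj in adjusted]


if __name__ == "__main__":
    # Illustrative raw p-values only; not values from the study.
    for p_adj, sig in bonferroni([0.01, 0.02, 0.5]):
        print(f"adjusted p = {p_adj:.3f}, significant: {sig}")
```

The correction is conservative: as the family of pairwise comparisons grows, each individual test needs a much smaller raw p-value to survive.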

Conclusion

Given the importance of color in design and the increasing attention to VR, we examined the effects of warm versus cool colors in VR on heart rate and affect. Our findings partially support our hypotheses: specifically, exposure to cool colors in VR may decrease heart rate and Negative Affect. However, we found no effects for either color group on Positive Affect, and the warm color exposure also decreased self-reported Negative Affect. These results may be because the VR color exposure, which involved sitting quietly for 10 min while watching colors, could have calmed the participants in general (e.g., lowered distress, irritability, nervousness). In future research, we could test different tasks during the VR color exposure. We also recommend drawing on emotion theories such as Core Affect and the Theory of Constructed Emotion to compare deactivating and activating emotions (e.g., Feldman Barrett & Russell, 1998; Russell, 2003) by using the PANAS-X (Watson & Clark, 1994). Future research could also collect a larger sample to be more robust against potential confounds of individual differences in initial heart rates across conditions (Figure 1).

These findings can be used to advance research on how to create better designs in VR and in other fields. Knowing the effects of color groups can allow designers to build more meaningful products geared toward their users. Because this study was conducted in VR, its results can help those who build simulations understand the effects of color and build impactful scenes (e.g., a blue and green zen garden for a calming effect). These findings can also be applied to other domains such as Art Therapy (Withrow, 2004), where knowing how colors may be perceived can help therapists better understand why their clients may choose specific color palettes. Lastly, a better understanding of the effects of color could also lead to more ethical designs (e.g., avoiding colors that induce arousal/stress), as understanding how some colors impact users allows designers to limit or choose these colors in their products, creating better user experiences and outcomes.

Acknowledgments

We are grateful for helpful comments on an earlier draft from Letitia Robinson and three anonymous reviewers.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Research Improvement Grant program to the first author from the University of Maryland College of Information.

ORCID iDs

Ciara A. Fabian https://orcid.org/0009-0008-0621-5180

Susannah B. F. Paletz https://orcid.org/0000-0002-9513-2799

References

AL-Ayash A., Kane R. T., Smith D., Green-Armytage P. (2016). The influence of color on student emotion, heart rate, and performance in learning environments. Color Research and Application, 41(2), 196–205. https://doi.org/10.1002/col.21949

https://journals.sagepub.com/doi/10.1177/10711813251367738

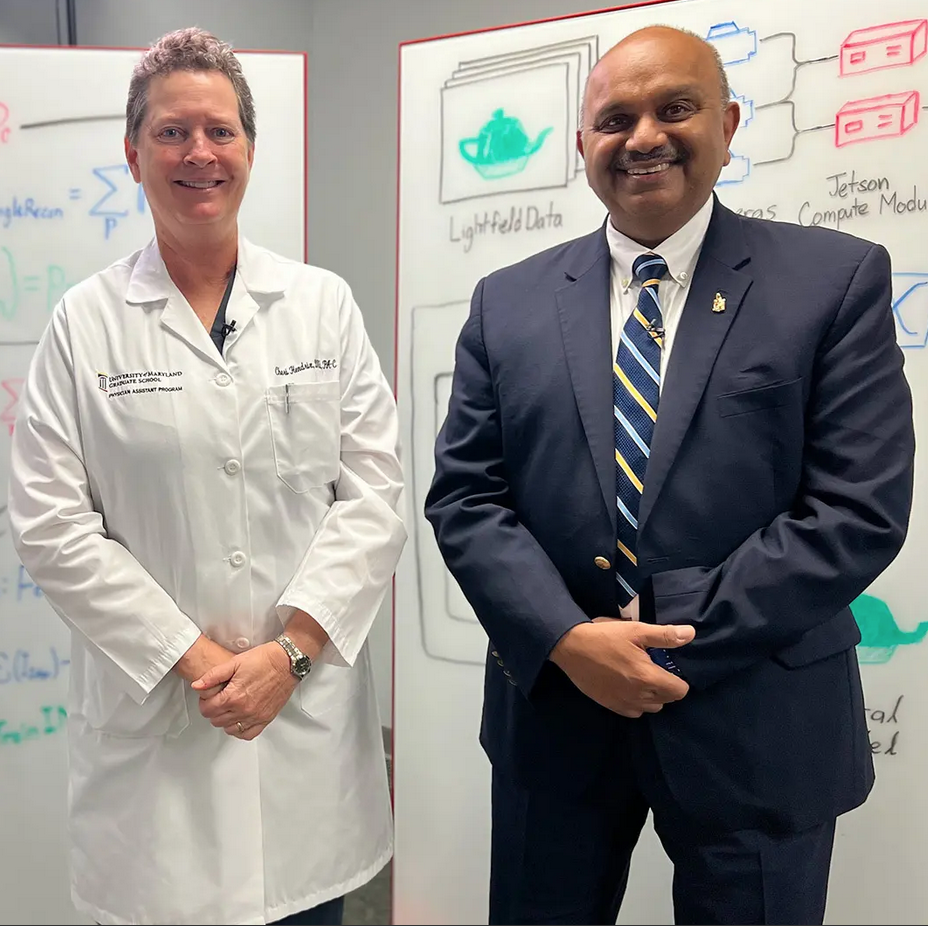

Medical Training—From Every Angle

Cutting-Edge VR Camera System Focuses on New Educational Possibilities

April 10, 2025

An advanced camera system developed at the University of Maryland, College Park is enabling futuristic teaching and learning methods for physician assistant students at the University of Maryland, Baltimore.

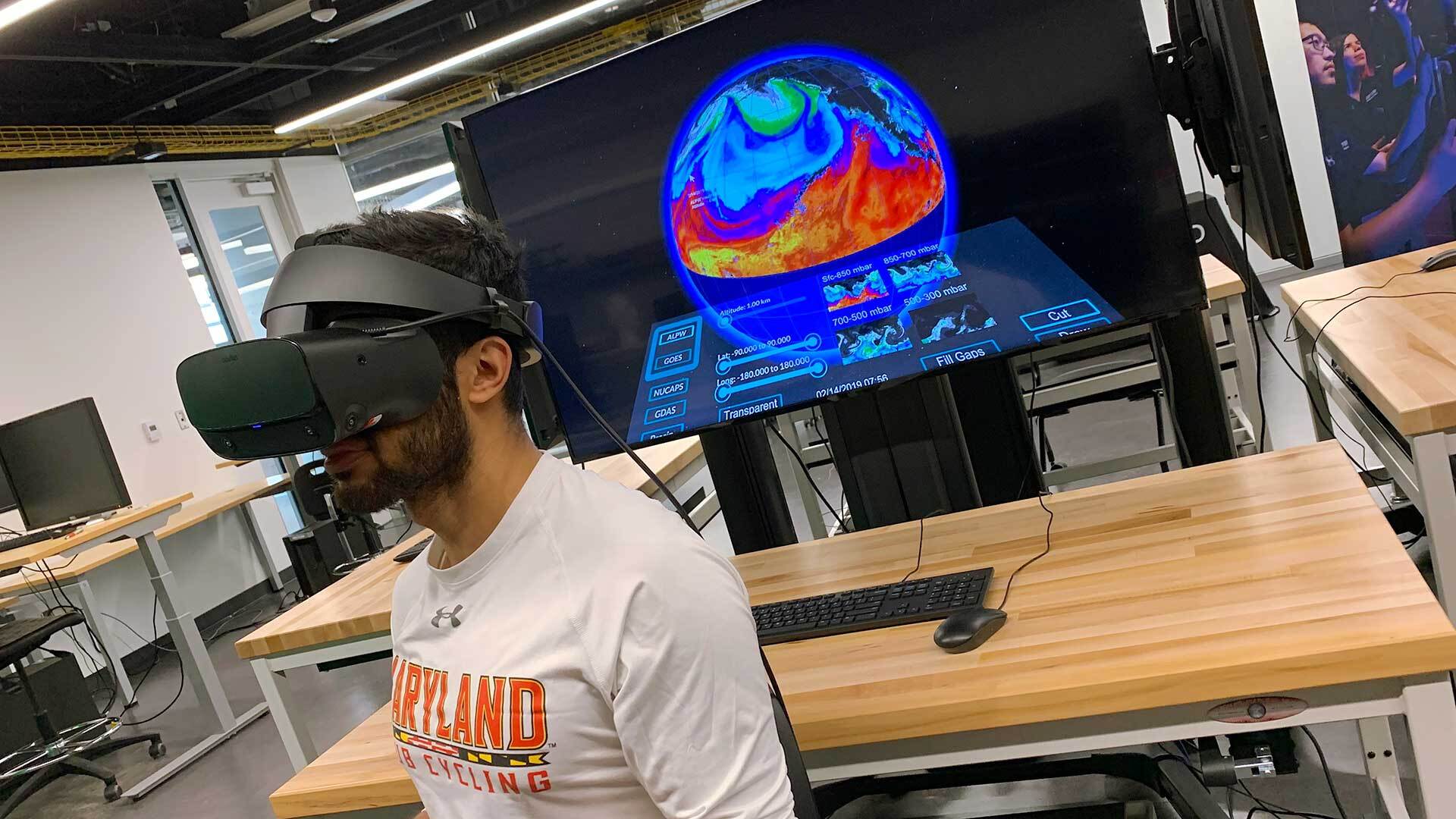

Called the HoloCamera, the system uses 300 imaging devices to create virtual reality (VR) training scenarios that let students “step into” a treatment and view the procedure from all angles. Funded by the National Science Foundation and led by computer science Professor Amitabh Varshney, dean of the College of Computer, Mathematical, and Natural Sciences, the project was forged through the University of Maryland Strategic Partnership: MPowering the State, a collaboration between UMCP and UMB to leverage the strengths of each institution.

Join Varshney, UMB Physician Assistant Program Director and Associate Professor Cheri Hendrix and their students taking the next step in medical training in the latest installment of “Enterprise: University of Maryland Research Stories.”

https://today.umd.edu/medical-training-from-every-angle

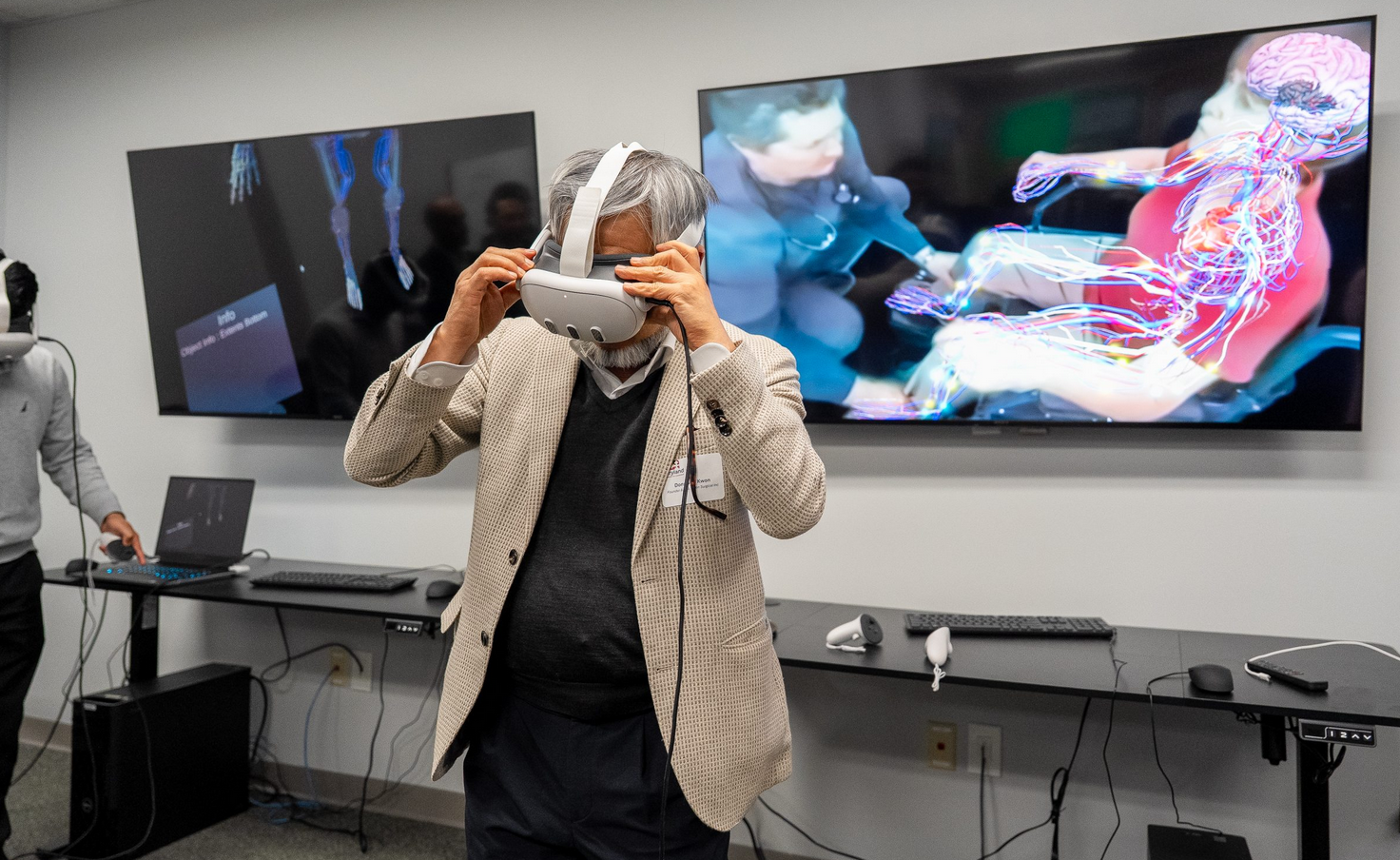

A New Generation of Doctoral Scholars Goes Beyond the Page

By Jessica Weiss ’05

April 07, 2025

From video games to VR, ARHU doctoral students are expanding dissertation scholarship through digital and experimental work.

When American studies doctoral student Christin Washington set out to explore themes of memory and spirituality, she knew a traditional, text-based dissertation wouldn’t suffice. To fully capture the lived experiences, spaces and rituals of Black women’s African-derived spiritual practices—like Obeah, Voodoo, Christianity and Rootwork—she needed to create something immersive.

Of the four chapters of her dissertation, which she will defend next year, one will be a 3D digital recreation of her grandmother’s house in Guyana, designed to be experienced in virtual or augmented reality. With additional sensory elements like sound and smell, she aims to transport her committee into the experience of Nine-Night—a Caribbean funerary tradition in which loved ones gather for nine nights to honor the deceased.

A Legacy of Innovation at UMD

While the traditional, chapter-based dissertation remains a cornerstone of humanities scholarship, a growing number of doctoral students in the College of Arts and Humanities (ARHU) are exploring new formats—video games, immersive websites, handmade objects, public humanities projects—to expand how research is created and shared. Advances in digital technology, along with increasing faculty support and a range of university resources—including specialized programs, funding, and dedicated spaces for creative and technical experimentation—are making it possible for students to integrate experimental approaches alongside traditional academic work.

“It’s sometimes hard for students to see this as a possibility since they’re still so surrounded by traditional academic culture,” said Matthew Kirschenbaum, Distinguished University Professor of English. “They don’t realize a dissertation can be something else entirely, and that this actually has a somewhat longer history than one might think.”

Kirschenbaum was an early pioneer. His 1999 dissertation at the University of Virginia, “Lines for a Virtual T[y/o]pography,” was one of the first-ever electronic humanities dissertations. After joining UMD in 2001, he helped shape MITH—the Maryland Institute for Technology in the Humanities—into a hub for experimental digital humanities (DH) scholarship. MITH, located in Hornbake Library, provides students with the technical and theoretical support needed to explore digital methods, from text analysis to data visualization.

A decade later, Kirschenbaum would begin to mentor another pioneering digital scholar at UMD: Amanda Visconti Ph.D. ’15. Their dissertation project, “Infinite Ulysses,” was a participatory digital edition of “Ulysses” in which users could highlight, annotate and interact with James Joyce’s famously difficult novel, creating a collaborative reading experience. Visconti used coding, user testing and web design as core research methods of the dissertation, rather than as mere supplements to a written document. The dissertation won the Graduate School’s 2016 Distinguished Dissertation Award.

“Dr. Visconti’s work challenged the boundaries of what a humanities dissertation could be,” Kirschenbaum said. “All of the challenges involved were intellectually exciting; Dr. Visconti really ended up teaching the committee a lot along the way.”

Now director of UVA Libraries’ Scholars’ Lab, Visconti has since consulted with dozens of students pursuing digital dissertation work. Visconti emphasizes the importance of being surrounded by faculty and peers, as well as courses and spaces like MITH, to support and validate non-traditional scholarship.

“Being at MITH and working with DH scholars meant I got to see what good scholarship in the field could look like,” Visconti said. “It's enormously helpful to have mentors and a community who accept your work as valid scholarship.”

Pushing the Boundaries of Scholarship

In working to recreate her grandmother’s house, Washington is collaborating with faculty across multiple disciplines—including American studies, English, women’s studies, immersive media design (IMD), geographical information sciences and historic preservation. Last summer, with funding from a 2024 Caribbean Digital Scholarship Collective grant, she traveled to Guyana with Assistant Clinical Professor Stefan Woehlke from the Historic Preservation Program to collect data and images of her grandmother’s house. Now based at AADHum and NarraSpaceXR, two campus makerspaces for digital experimentation and storytelling, she is using tools like virtual reality headsets, holographic displays and high-performance computing to create a sensory, immersive experience of the house—one that will be presented to her committee alongside traditional written chapters.

“So much of this work is about finding the right form—and sometimes, that means creating it from scratch,” she said. “My committee has been incredibly open to helping me develop a format that fully articulates the lived experience of Black women.”

Other students are also pushing the boundaries of dissertation formats. Lisa Abena Osei, a doctoral student in English, is designing an interactive game that immerses players in Afrofuturist and Africanfuturist worlds, inviting them to engage with alternative histories, technologies and narratives. (Afrofuturism broadly imagines Black futures through a diasporic lens, while Africanfuturism remains rooted in African cultures and histories.) Himadri Agarwal, another doctoral student in English, is also making a game as part of her project, using it to examine digital gaming and reparative game design practices. She will present a physical installation that people can interact with.

Not all experimental dissertations are digital. Nat McGartland, a doctoral student in English, is integrating textile art into her dissertation on visual media and data representation, crocheting data visualizations to accompany each chapter. The crocheted pieces serve to represent McGartland’s argument that human decision-making always infiltrates the collection, processing and presentation of data. Meanwhile, American studies doctoral candidate Kristy Li Puma is producing public events and digital humanities projects alongside the community members she interviews for her monograph dissertation on the history, politics and cultural practices of D.C.’s alternative and underground communities.

Preparing for the Future

Beyond redefining academic research and creative scholarship, Marisa Parham, professor of English, associate director of MITH and director of AADHum and NarraSpaceXR, said these projects are shaping the future of academic hiring, publishing and public engagement. For many students, the dissertation isn’t just a requirement; it’s a testing ground for the kind of work they may do after the Ph.D., whether in academia or elsewhere. And by working in non-traditional formats, students are developing a range of skills that go beyond traditional research and writing.

When working on digital projects, students are “working to collect hardware, navigating software licenses, even hiring people,” Parham said. “This means that working on the dissertation requires acquiring or honing management, planning and/or design skills that are clearly transferable, while also becoming a skilled researcher and thinker.”

Digital and experimental dissertations also have the potential to reach broader audiences. Unlike traditional monographs, which are typically read by a small group of scholars, these projects invite public engagement. That means students must learn how to communicate their work both to specialized academic communities and to non-experts—an essential skill for careers in and beyond academia.

For Washington, that means rethinking narratives about how Black women’s lives are understood and represented. She hopes to open up the digital elements of her dissertation to the public for viewing—allowing others to step inside it.

“My ultimate goal is for people to continue to understand the intimate and interior lives of Black women,” she said. “I’m hoping to contribute a piece of artwork and scholarship that does some of that work.”

Photos by Lisa Helfert.

https://arhu.umd.edu/news/new-generation-doctoral-scholars-goes-beyond-page

New UMD Research Brings Smarter, More Subtle Assistance to AR Glasses

UMD Ph.D. student Geonsun Lee and Google researcher Ruofei Du developed Sensible Agent, a framework that allows AR glasses to anticipate user needs using gaze and gesture cues.

October 7, 2025

Recent innovations, such as Google's Project Astra, exemplify the potential of proactive agents embedded in augmented reality (AR) glasses to offer intelligent assistance that anticipates user needs and seamlessly integrates into everyday life. These agents promise remarkable convenience, from effortlessly navigating unfamiliar transit hubs to discreetly offering timely suggestions in crowded spaces. Yet, today’s agents remain constrained by a significant limitation: they predominantly rely on explicit verbal commands from users. This requirement can be awkward or disruptive in social environments, cognitively taxing in time-sensitive scenarios, or simply impractical.

To address these challenges, we introduce Sensible Agent, published at UIST 2025, a framework designed for unobtrusive interaction with proactive AR agents. Led by Ruofei Du, Interactive Perception & Graphics Lead at Google, and Geonsun Lee, a University of Maryland Department of Computer Science Ph.D. student and Google XR Student Researcher advised by Distinguished University Professor Dinesh Manocha, the project represents a collaboration bridging cutting-edge academic research and industry innovation.

Sensible Agent builds on the team’s prior research in Human I/O and fundamentally reshapes interaction by anticipating user intentions and determining the best approach to deliver assistance. It leverages real-time multimodal context sensing, subtle gestures, gaze input, and minimal visual cues to offer unobtrusive, contextually appropriate assistance—marking a crucial step toward truly integrated, socially aware AR systems that respect user context, minimize cognitive disruption, and make proactive digital assistance practical for daily life.

https://www.cs.umd.edu/article/2025/10/new-umd-research-brings-smarter-more-subtle-assistance-ar-glasses

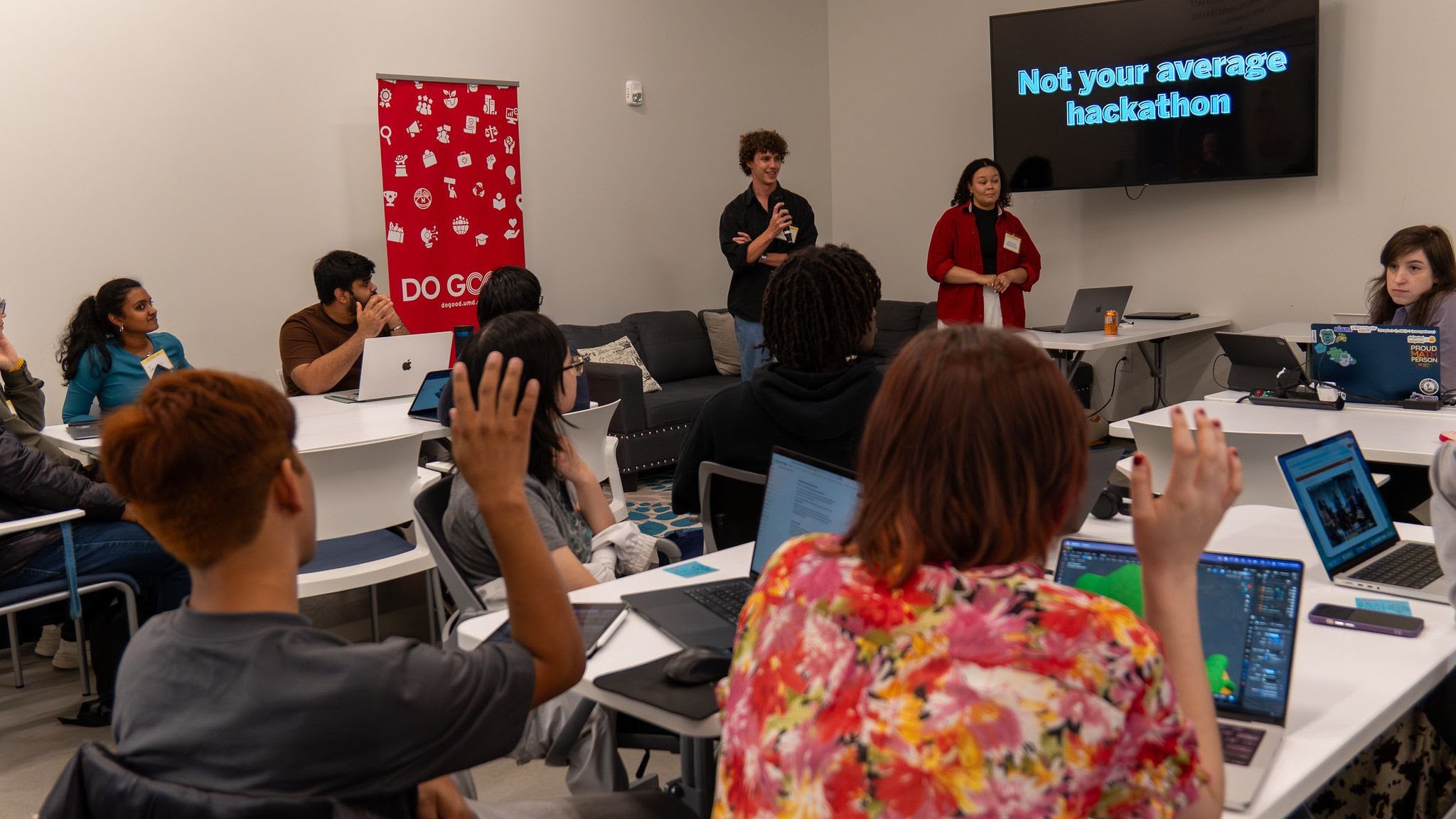

A New Reality for Physician Assistant Students

January 31, 2025 | By Alex Likowski

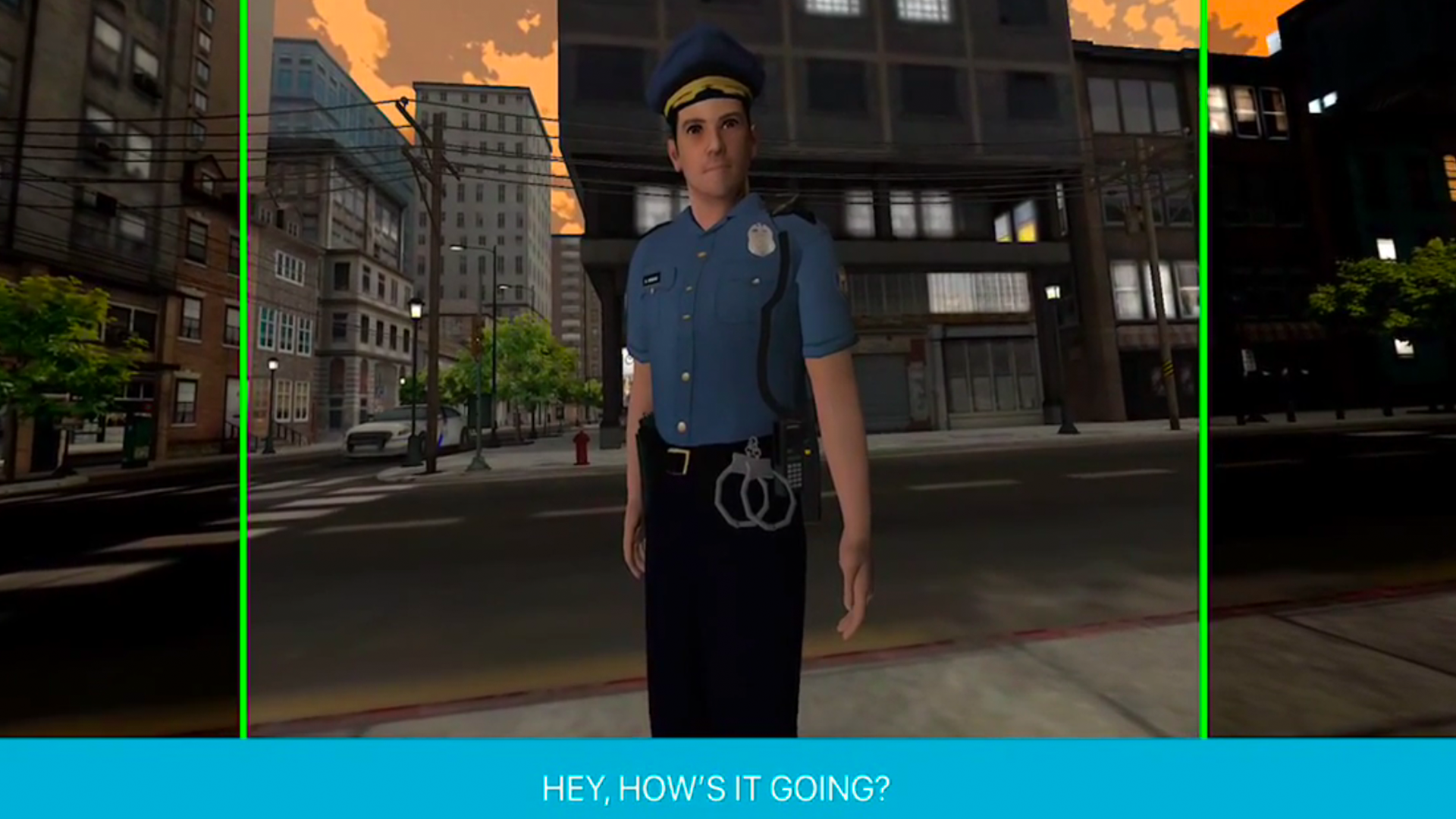

“Today is gonna be a really, really fun day for you,” Assistant Dean for Physician Assistant Education and Associate Professor Cheri Hendrix, DHEd, MSBME, PA-C, DFAAPA, told her class of 58 physician assistant (PA) students. In truth, it would be hard to say just who would have the most fun, the students who sat in anticipation of an exciting new high-tech learning experience, or Hendrix, who waited a very long time for this day to arrive.

“I think this is really going to enrich what you know about neurologic disease,” she continued, understating her expectations a bit. For the first time, this learning module made use of virtual reality (VR) technology, offering her entire didactic year cohort an immersive experience. With the use of VR headsets, the students would be virtually in the room with Hendrix as she examined a standardized patient — a professional actor posing as a patient who was presenting with signs of distress. The plan was to give the students a brief knowledge test before their VR experience, then another test afterward to see how much this experience improved their understanding.

Student Lauren Makarehchi was among the first in the group to experience the virtual exam.

“I feel like I’m actually there, even though I can’t see my hands or anything. But it’s like, I feel like I’m very immersed in the experience,” she said with her headset still on.

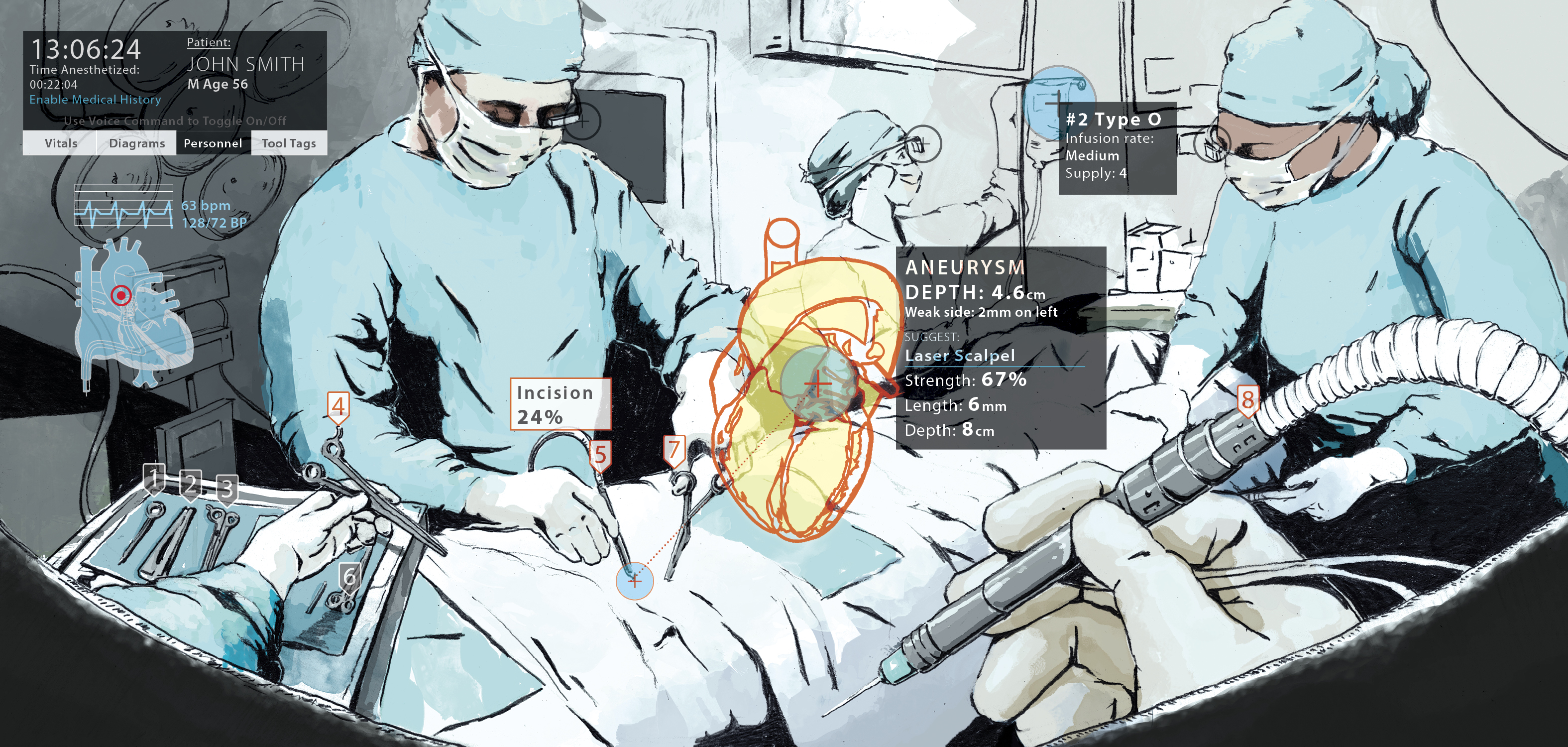

Not only could Makarehchi see the exam, but she also could move around the room and observe provider and patient from every angle. Additionally, as the patient described her experiences for Hendrix – all classic symptoms of a stroke – an animated graphical overlay allowed Makarehchi and the other students to see what was going on inside the patient’s body, including the nervous and cardiovascular systems.

“I can see that the clot started in her atrium, went up to the left side of her brain, and now you can see the impulses are being limited on the right side of her body, which is causing the motor and sensory limitations that we can see on the exam,” said student Meg Rice. After her five-minute virtual experience, Rice seemed impressed.

“It’s a total game-changer to kind of be able to be in the room with them, seeing what’s going on in real time, basically as she does the exam and asks the different questions. And you know how what’s going on inside of her body is influencing her answers and her symptoms and her signs all in real time. So, that’s really cool,” she said. “When I get to clinicals next year, if I have a patient with a similar situation, I can kind of have this picture in my brain of what might be going on.”

Every student appeared to have a similar impression, and most were quick to see the impact VR training might have on their ability to retain what they learn.

“I think this kind of experience will change the way you look at patients for sure, because now I’m able to remember seeing inside and what’s happening,” said student Simone Hill. “It’s one thing to see someone presenting physically with their complaints, but when you have experiences like this, you’re able to remember: OK, I remember seeing what this looks like from the inside. I remember seeing which part of the brain was impacted by this and where the blockages were and where the vessels were flowing through.”

Classmate Eugene Obeng-Appiah agreed. “If I see a patient who presents with certain symptoms, I can go back to that memory that I have of what I saw. And so I think that would allow me to be able to put pieces together a little bit more and faster as well.”

The ability to retain and recall a huge and wide array of information is precisely what Hendrix and her colleagues are trying to gauge.

“We have 24 months to get 3½ years of medical school in them,” Hendrix said. “How do you do that so that the understanding is there, the knowledge is there? And the application of that, you’ve got to apply these medical concepts. You can’t memorize this.”

In those 24 months, PA students must master topics such as anatomy, clinical practice, diagnostic tests, and disease over many disciplines, including family medicine, internal medicine, pediatric medicine, women’s health, psychiatric medicine, behavioral health, emergency medicine, and surgery.

To fill that very tall order, Hendrix can rely on her skilled team at the University of Maryland School of Graduate Studies (UMSGS). But she wanted to step up the impact of their training and help students reach what she calls “a-ha!” moments faster. To do that, she wanted students in the classroom to get as close as possible to the real thing through virtual reality. For that, she called in another team, this one from the University of Maryland, College Park’s (UMCP) College of Computer, Mathematical, and Natural Sciences. The college's dean, Amitabh Varshney, PhD, is a pioneer in applying high-performance computing and visualization to engineering, science, and medicine.

“Oh, that’s a marriage made in heaven. Boy, Dr. Amitabh Varshney, best buddy. I love him!” Hendrix gushed. When Hendrix first met her College Park comrade by chance, Varshney had just finished building the largest volumetric capture studio in the world, funded by a $1 million grant from the National Science Foundation. Called HoloCamera, the studio uses AI-driven techniques to fuse and reconstruct dynamic visual data captured simultaneously by 300 cameras. Varshney’s team integrated anatomically accurate animations, precisely aligned with the dynamic hologram models, to enhance the student learning experience.

“I’ve got some ideas I want to use,” Hendrix said, recalling their conversation. “I want to see inside a patient. I want to see inside a mannequin. I want to use holographic technology to demonstrate to the students the pathophysiologic mechanism of diseases. They said, ‘Great.’ ”

Varshney’s team now works with Hendrix’s PA program under the aegis of the University of Maryland Institute for Health Computing. That effort is supported by the University of Maryland Strategic Partnership: MPowering the State (MPower). MPower unites the state of Maryland’s two most powerful public research engines, the University of Maryland, Baltimore (UMB) and UMCP, to strengthen and serve the state of Maryland and its citizens.

So far, only the stroke patient scenario has been produced for use in the classroom, but Hendrix is optimistic about producing more videos and introducing more technology, such as the ability to not just see and hear, but also to touch and feel.

Enter Dixie Pennington, MS, CHSE, CHSOS, simulation director for the UMSGS PA program. Her job is to think ahead of the technology. She also finds ways to work with the cutting edge of what’s available right now, such as haptic gloves.

“Haptic is related to a sensation where you can touch and feel and you can actually get feedback that you are touching something,” she said. “If you’re immersed into some sort of ARV [artificial reality video] or setting, you don’t know that you’re touching an avatar or something like that. But if you wore haptic gloves, you would know. You’d have that sensation, that feedback that would come back to your hand, which makes it that much more real.”

The students are thinking ahead, too. Inspired by a dermatology PA who treated her when she was 13, student Emily Lamb has her sights set on following the same path, but maybe with a boost from technology. “If there was a way to use virtual reality so where you can see different skin lesions, see the depth on different skin tones and different ages, even pediatric to geriatric, the skin is so different, it changes. It’s actually the largest organ in our body,” she said. “So, to be able to study it in a more in-depth and realistic way, it would completely change the field.”

Bottom line, Hendrix said the day exceeded her expectations. “I knew they would like it, but I didn’t know how much they would absolutely love it. And many of the students came out saying, ‘Can I just learn every condition this way?’”

https://www.umaryland.edu/news/archived-news/january-2025/a-new-reality-for-physician-assistant-students.php

Tech Talk: Virtual Reality healthcare training at University of Maryland

by: Tosin Fakile

Posted: May 7, 2025 / 04:08 PM EDT

Updated: May 7, 2025 / 04:11 PM EDT

COLLEGE PARK, Md. (DC News Now) — The University of Maryland, College Park campus is using a 3D virtual human avatar to train students to diagnose patients in a new virtual reality medical training scenario.

“About five years ago, we had this vision to create cameras that could create 3D virtual avatars of humans that were cinematically real, very high fidelity,” Professor Amitabh Varshney said.

That vision resulted in the creation of the HoloCamera: Cinematic Avatar Imaging Studio.

“Instead of a camera person, for instance, in a movie, the director directs how the user will experience a scene. We are now able to allow users to directly figure out how they would like to immerse and see, experience a particular environment,” Varshney said.

That full immersion happens at the volumetric capture facility at UMD’s College of Computer, Mathematical and Natural Sciences.

“It has 300 cameras. Each camera is capturing information at 4K resolution, 300 frames a second. So overall, we are getting 100 billion samples per second in this facility,” Varshney said.
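An aggregate capture rate like this is the product of the number of cameras, the pixels in each frame, and the frame rate. The short sketch below makes that arithmetic explicit. It rests on assumptions the article does not confirm: that "4K" means a 3840 × 2160 UHD frame, and that one pixel value counts as one sample. The function name is hypothetical.

```python
def aggregate_samples_per_second(cameras: int, width: int, height: int,
                                 fps: int, channels: int = 1) -> int:
    """Total pixel samples captured per second across all cameras.

    channels=1 counts one sample per pixel; channels=3 would count
    separate R, G, B values (an assumption, not stated in the article).
    """
    pixels_per_frame = width * height
    return cameras * pixels_per_frame * fps * channels


# Hypothetical inputs: 300 cameras at 300 frames per second, with "4K"
# assumed to mean a 3840 x 2160 UHD frame (the actual sensor size is not given).
total = aggregate_samples_per_second(cameras=300, width=3840, height=2160, fps=300)
print(f"{total:,} samples/s (~{total:.2e})")
```

Under these assumptions the product is about 7.5 × 10^11 per second. That is several times the quoted 100 billion. The article does not state the studio's effective per-camera resolution or frame rate, or what counts as a "sample," so the quoted total is best read as an order-of-magnitude description.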

It’s designed for high-precision learning and simulation.

Varshney said that a few years ago, his team carried out a study showing that users recall information much better when they experience it in an embodied fashion in a VR environment than on a desktop.

“If you look at the practice of surgery as an example, you have situations where no more than a small handful of residents, or interns, or healthcare professionals, students can participate and see the surgery,” Varshney said. “We would be able to allow students to experience and stand in the shoes of the surgeon, add their own. Well, further, it is scalable.”

It took a few years and several versions to get to this point, and more is still planned for the HoloCamera.

“One of the other things that we are using this facility for is to understand how artificial intelligence can be used to generate. So, we will capture one set of actions in this facility, and can we then use generative AI to generate other actions and other modalities from this facility? So that we can try a lot of what-if scenarios, in an interactive manner,” Varshney said. “How can we use this as a tool to train the next generation of computer scientists, artificial intelligence scientists and engineers, and technologists in the underlying technology for this?”

https://www.dcnewsnow.com/tech-talk/tech-talk-virtual-reality-healthcare-training-at-university-of-maryland/

A ‘Wave’ of New Understanding About Earth’s Oceans and Atmosphere

Faculty, Students Collaborate With NASA on Interactive Installation at Kennedy Center

April 1, 2025

On one towering screen, monochrome clouds whoosh by as if the viewer is watching them from above. On the next screen, a range of blues and greens on a section of ocean represents the levels of carbon and chlorophyll present. On a third, a rainbow effect on the outer edges of an image of Earth shows how far various wavelengths can penetrate the planet’s surface.

These screens and the scenes they show make up “Wave: From Space to Ocean,” a project led by a team of University of Maryland faculty members and students working with NASA scientists and counterparts from the University of North Texas. Together, they’re turning data from a satellite into an interactive, digestible visual experience for the public. The project is on view at the Kennedy Center through April 13 as part of the “Earth to Space: Arts Breaking the Sky” festival.

The PACE (Plankton, Aerosol, Cloud, Ocean Ecosystem) satellite launched on Feb. 8, 2024. It is collecting critical information about ocean health and air quality in two ways. First, it measures the distribution of phytoplankton, the microscopic plants and algae that feed many aquatic creatures while producing much of the planet’s oxygen. Second, it monitors atmospheric variables associated with air quality.

“The satellite is able to look at our globe in ways that have never been done before,” said Ian McDermott ’12, the immersive media technician for UMD’s Immersive Media Design (IMD) program and one of the leaders of the Wave project. “It’s able to take photos and absorb data that uses colors that are beyond our eyes’ capacity to see. We’re making that data engaging to the general public.”

IMD is affiliated with UMD’s Arts for All initiative. The initiative identifies and supports creative ways to combine the university’s strengths in the arts, sciences and technology. Its aims are to advance social justice, build community and develop collaborative, people-centered solutions to grand challenges. Arts for All also helped fund the initial project through a Spring 2024 ArtsAMP Grant.

“Wave” is a roughly 15-minute animation projected on screens 16 feet tall. Motion capture cameras track guests’ bodies and movements. By waving their hands or making other motions, visitors can reveal data about phytoplankton levels or turn the globe to see a different angle of the atmosphere’s wavelengths. They can also watch what a phytoplankton bloom (a rapid increase in the population of the photosynthetic plankton) looks like. Visitors can also track images of clouds captured by HARP2, an instrument that uses 60 cameras at once to take images. The results show the clouds’ sizes and classifications, revealing information such as where a lack of rain clouds might signal drought.

The project allows guests to “become proactive researchers and discover new information, new answers and new questions,” said Myungin Lee, a lecturer in the Department of Computer Science and a leader on “Wave” with Mollye Bendell, assistant professor of art. (Sam Crawford, sound and media technologist in the School of Theatre, Dance, and Performance Studies and co-director of the Maya Brin Institute for New Performance, also worked on “Wave.”)

The installation is an example of how UMD’s faculty members are developing new ways of teaching and learning. “This artwork involves programming, data processing and visualization, user interface design, 3D modeling and scientific simulation,” said Lee. “The students are getting unique opportunities to be involved in such a large-scale project.”

Andrei Davydov ’25, a student in the IMD program, worked on 3D modeling for the project, sharpening his skills in coding. “It was a fun animation challenge to have the planktons both work as a group of 200, while at the same time making them have their own individual personalities,” he said.

The Wave team members hope that those who interact with the installation will walk away with a deepened appreciation for the intricate ecosystems that inhabit our planet’s oceans and atmosphere. “I want them to be mesmerized and taken by awe by the big scale of the exhibition, and also connect with how our oceans are doing,” said Yooeun Lee ’26, an IMD student. “I hope people will appreciate nature and continue to bring more recognition to it.”

https://today.umd.edu/a-wave-of-new-understanding-about-earths-oceans-and-atmosphere

Creating Wearable Devices for Body-to-Body Communication

March 6, 2025

Jun Nishida’s Embodied Dynamics Laboratory explores the dynamics of our physical skills and interactions.

In his Embodied Dynamics Laboratory, University of Maryland Computer Science and Immersive Media Design Assistant Professor Jun Nishida creates wearable devices that allow our bodies to communicate and measure our skills and embodied knowledge.

Nishida's devices range from interactive exoskeletons that share finger dexterity skills from one person to another to a virtual reality system that lets adults experience the world from a 5-year-old’s perspective. With them, Nishida aims to better understand the dynamics of our physical experiences, perceptions and interactions. The goal of Nishida’s research: to establish body communication and explore how computer technology can improve our overall wellbeing.


https://cmns.umd.edu/news-events/news/jun-nishida-embodied-dynamics-laboratory-wearable-technology

Varjo Case Study: Engineering the Future of Flight: University of Maryland’s Flight Simulation and Control Lab Drives Breakthrough VR/XR Research

At UMD’s Extended Reality Flight Simulation and Control Lab, tomorrow’s flight training breakthroughs are being developed today. The lab is building next-generation technologies aimed at improving aviation safety, pilot performance, and training efficiency.

The Department of Aerospace Engineering at the University of Maryland (UMD) is pioneering the future of aviation through breakthrough research. At the heart of these innovations is the Extended Reality Flight Simulation and Control Lab, directed by Assistant Professor Umberto Saetti.

The lab specializes in advanced virtual, augmented, and extended reality (VR/AR/XR) simulations for flight training and evaluation. It currently has about 15 members, ranging from master’s and PhD students to postdoctoral researchers.

Advancing Aerospace Research and Innovation

The lab’s research covers a broad spectrum of critical aerospace areas, including flight dynamics, vertical lift technologies, and human-machine interaction. At the core of its work is exploring new methods to enhance aviation safety, pilot performance, and training efficiency, with a focus on approaches that have never been attempted before.

Since its establishment approximately two and a half years ago, the lab has secured roughly $3 million in research funding from prestigious organizations such as the U.S. Army, U.S. Navy, National Science Foundation, Lockheed Martin, and NASA.

University of Maryland Extended Reality Flight Simulation and Control Lab

Unlocking the Full Potential of Human Senses in Flight

The lab’s research focuses specifically on integrating visual feedback with other sensory inputs to improve training effectiveness. These inputs include haptics, active vestibular stimulation, and spatialized (3D) audio. The team uses a variety of wearable physiological sensors to accurately measure pilot workload during simulations and to actively manage and optimize the experience.

Because pilots rely primarily on vision and their sense of equilibrium when flying, other senses such as touch and hearing are generally underused in aircraft control. “We’re investigating ways to effectively use these additional sensory inputs to enhance pilot performance and improve aviation safety, especially in off-nominal situations,” Saetti says.